Retrieval Recall

Module Interface

- class torchmetrics.retrieval.RetrievalRecall(empty_target_action='neg', ignore_index=None, top_k=None, aggregation='mean', **kwargs)

Compute IR Recall.

Works with binary target data. Accepts float predictions from a model output.

As input to `forward` and `update`, the metric accepts the following input:

- `preds` (Tensor): A float tensor of shape `(N, ...)`
- `target` (Tensor): A long or bool tensor of shape `(N, ...)`
- `indexes` (Tensor): A long tensor of shape `(N, ...)` which indicates to which query a prediction belongs

As output of `forward` and `compute`, the metric returns the following output:

- `r@k` (Tensor): A single-value tensor with the recall (at `top_k`) of the predictions `preds` w.r.t. the labels `target`

All `indexes`, `preds` and `target` must have the same dimension and will be flattened at the beginning, so that, for example, a tensor of shape `(N, M)` is treated as `(N * M, )`. Predictions are first grouped by `indexes`; the metric is then computed for each query and the result is the mean over all queries.

- Parameters:

  - empty_target_action (str) – Specify what to do with queries that do not have at least one positive target. Choose from:
    - 'neg': those queries count as 0.0 (default)
    - 'pos': those queries count as 1.0
    - 'skip': skip those queries; if all queries are skipped, 0.0 is returned
    - 'error': raise a ValueError

  - ignore_index (Optional[int]) – Ignore predictions where the target is equal to this number.
  - top_k (Optional[int]) – Consider only the top k elements for each query (default: None, which considers them all).
  - aggregation (Union[Literal['mean', 'median', 'min', 'max'], Callable]) – Specify how to aggregate over indexes. Can be either a custom callable that takes a single tensor and returns a scalar value, or one of the following strings:
    - 'mean': average value is returned
    - 'median': median value is returned
    - 'max': max value is returned
    - 'min': min value is returned
  - kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.

- Raises:

  - ValueError – If `empty_target_action` is not one of `error`, `skip`, `neg` or `pos`.
  - ValueError – If `ignore_index` is neither `None` nor an integer.
  - ValueError – If `top_k` is neither `None` nor an integer greater than 0.
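The `empty_target_action` and `aggregation` options described above can be illustrated with a small plain-Python sketch. It is not the library's implementation: the function name `combine` and the convention of marking a query without positive targets as `None` are assumptions made for illustration only, and the handling of `'median'` for an even number of queries may differ from what torchmetrics does.

```python
import statistics


def combine(per_query, empty_target_action="neg", aggregation="mean"):
    """Combine per-query recall values into one score (illustrative sketch).

    per_query: one recall value per query, or None when the query has no
    positive target (a hypothetical convention used only in this sketch).
    """
    if empty_target_action not in ("neg", "pos", "skip", "error"):
        raise ValueError(f"unknown empty_target_action: {empty_target_action!r}")

    scores = []
    for value in per_query:
        if value is not None:
            scores.append(value)
        elif empty_target_action == "neg":
            scores.append(0.0)  # query counts as 0.0
        elif empty_target_action == "pos":
            scores.append(1.0)  # query counts as 1.0
        elif empty_target_action == "error":
            raise ValueError("a query has no positive target")
        # 'skip': the query is dropped

    if not scores:
        return 0.0  # every query was skipped

    named = {
        "mean": statistics.mean,
        "median": statistics.median,  # tie handling may differ from the library
        "min": min,
        "max": max,
    }
    reducer = named[aggregation] if isinstance(aggregation, str) else aggregation
    return reducer(scores)


per_query = [1.0, 0.5, None]  # third query has no positive target
print(combine(per_query))                      # 0.5
print(combine(per_query, "skip"))              # 0.75
print(combine(per_query, aggregation="max"))   # 1.0
```

With `'neg'` the empty query pulls the mean down to 0.5, while `'skip'` averages only the two queries that have a positive target.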

Example

    >>> from torch import tensor
    >>> from torchmetrics.retrieval import RetrievalRecall
    >>> indexes = tensor([0, 0, 0, 1, 1, 1, 1])
    >>> preds = tensor([0.2, 0.3, 0.5, 0.1, 0.3, 0.5, 0.2])
    >>> target = tensor([False, False, True, False, True, False, True])
    >>> r2 = RetrievalRecall(top_k=2)
    >>> r2(preds, target, indexes=indexes)
    tensor(0.7500)
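To see where the value 0.75 in the example comes from, the grouping by `indexes` and the per-query recall can be traced by hand. The following is a minimal plain-Python sketch under stated assumptions: `grouped_recall` is a hypothetical helper, not part of torchmetrics, and it hard-codes the default `'neg'` behaviour and mean aggregation.

```python
from collections import defaultdict


def grouped_recall(preds, target, indexes, top_k=None):
    """Mean recall@k over queries (illustrative sketch, not the library code)."""
    # Group (score, label) pairs by the query they belong to.
    queries = defaultdict(list)
    for score, label, query_id in zip(preds, target, indexes):
        queries[query_id].append((score, bool(label)))

    per_query = []
    for pairs in queries.values():
        n_relevant = sum(label for _, label in pairs)
        if n_relevant == 0:
            per_query.append(0.0)  # mirrors the default 'neg' behaviour
            continue
        # Rank documents by descending score and keep the top k.
        ranked = sorted(pairs, key=lambda pair: pair[0], reverse=True)
        top = ranked if top_k is None else ranked[:top_k]
        per_query.append(sum(label for _, label in top) / n_relevant)

    # Average the per-query recalls.
    return sum(per_query) / len(per_query)


indexes = [0, 0, 0, 1, 1, 1, 1]
preds = [0.2, 0.3, 0.5, 0.1, 0.3, 0.5, 0.2]
target = [False, False, True, False, True, False, True]
result = grouped_recall(preds, target, indexes, top_k=2)
print(result)  # 0.75
```

Query 0 retrieves its single relevant document within the top 2 (recall 1.0), query 1 retrieves one of its two relevant documents (recall 0.5), and the mean of the two is 0.75.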

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

  - val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling `metric.forward` or `metric.compute`, or a list of these results. If no value is provided, `metric.compute` is called automatically and its result is plotted.
  - ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

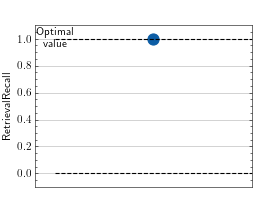

    >>> import torch
    >>> from torchmetrics.retrieval import RetrievalRecall
    >>> # Example plotting a single value
    >>> metric = RetrievalRecall()
    >>> metric.update(torch.rand(10,), torch.randint(2, (10,)), indexes=torch.randint(2,(10,)))
    >>> fig_, ax_ = metric.plot()

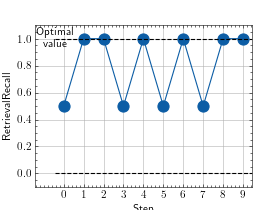

    >>> import torch
    >>> from torchmetrics.retrieval import RetrievalRecall
    >>> # Example plotting multiple values
    >>> metric = RetrievalRecall()
    >>> values = []
    >>> for _ in range(10):
    ...     values.append(metric(torch.rand(10,), torch.randint(2, (10,)), indexes=torch.randint(2,(10,))))
    >>> fig, ax = metric.plot(values)

Functional Interface

- torchmetrics.functional.retrieval.retrieval_recall(preds, target, top_k=None)

Compute the recall metric for information retrieval.

Recall is the fraction of relevant documents retrieved among all the relevant documents.

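`preds` and `target` should be of the same shape and live on the same device. If no `target` is `True`, `0` is returned. `target` must be either bool or integers and `preds` must be `float`, otherwise an error is raised. If you want to measure Recall@K, `top_k` must be a positive integer.

- Parameters:

  - preds – Float tensor of predictions.
  - target – Bool or integer tensor of labels, with the same shape as `preds`.
  - top_k – Consider only the top k elements (default: None, which considers them all).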

- Return type:

- Returns:

  A single-value tensor with the recall (at `top_k`) of the predictions `preds` w.r.t. the labels `target`.

- Raises:

  - ValueError – If the `top_k` parameter is neither `None` nor an integer larger than 0.

Example

    >>> from torch import tensor
    >>> from torchmetrics.functional import retrieval_recall
    >>> preds = tensor([0.2, 0.3, 0.5])
    >>> target = tensor([True, False, True])
    >>> retrieval_recall(preds, target, top_k=2)
    tensor(0.5000)
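The definition above (relevant documents retrieved within the top k, divided by all relevant documents) can be written out for a single query in plain Python. This is a sketch under stated assumptions, not the library's implementation: `recall_at_k` is a hypothetical name, and it operates on Python lists rather than tensors.

```python
def recall_at_k(preds, target, top_k=None):
    """Recall@k for one query (illustrative sketch, not the library code)."""
    if top_k is not None and not (isinstance(top_k, int) and top_k > 0):
        raise ValueError("top_k must be None or a positive integer")

    n_relevant = sum(bool(t) for t in target)
    if n_relevant == 0:
        return 0.0  # no relevant documents: 0 is returned

    k = len(preds) if top_k is None else min(top_k, len(preds))
    # Rank document positions by descending score and keep the first k.
    ranked = sorted(range(len(preds)), key=lambda i: preds[i], reverse=True)[:k]
    hits = sum(bool(target[i]) for i in ranked)
    return hits / n_relevant


score = recall_at_k([0.2, 0.3, 0.5], [True, False, True], top_k=2)
print(score)  # 0.5
```

The top 2 documents by score are those scored 0.5 (relevant) and 0.3 (not relevant), so one of the two relevant documents is retrieved and the recall is 0.5, matching the example above.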