Equal Error Rate (EER)

Module Interface

- class torchmetrics.classification.EER(**kwargs)

Compute the Equal Error Rate (EER) for a classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.
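As a rough illustration of the definition above, the following pure-Python sketch sweeps the distinct scores as thresholds from high to low and returns the mean of FAR and FRR at the first threshold where their gap is smallest. The function name `equal_error_rate`, the use of exact fractions, and the first-minimum tie-breaking rule are assumptions made for this example; it is not a description of the library's internals.

```python
from fractions import Fraction


def equal_error_rate(scores, labels):
    """Illustrative EER for binary labels (1 = positive).

    Assumes at least one positive and one negative sample.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    best_gap, best_eer = None, None
    # A sample is accepted when its score is >= the threshold.
    for thr in sorted(set(scores), reverse=True):
        fp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 0)
        fn = sum(1 for s, y in zip(scores, labels) if s < thr and y == 1)
        far = Fraction(fp, n_neg)  # false acceptance rate (FPR)
        frr = Fraction(fn, n_pos)  # false rejection rate (FNR)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:  # keep the first minimum
            best_gap, best_eer = gap, (far + frr) / 2
    return float(best_eer)


preds = [0.13, 0.26, 0.08, 0.19, 0.34]
target = [0, 0, 1, 1, 1]
print(round(equal_error_rate(preds, target), 4))  # 0.5833
```

Exact fractions are used so that ties between thresholds are resolved by order rather than by floating-point rounding.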

This module is a simple wrapper to get the task-specific versions of this metric, which is done by setting the task argument to either 'binary', 'multiclass' or 'multilabel'. See the documentation of BinaryEER, MulticlassEER and MultilabelEER for the specific details of how each argument is used, along with examples.

- Legacy Example:

    >>> from torch import tensor
    >>> from torchmetrics.classification import EER
    >>> preds = tensor([0.13, 0.26, 0.08, 0.19, 0.34])
    >>> target = tensor([0, 0, 1, 1, 1])
    >>> eer = EER(task="binary")
    >>> eer(preds, target)
    tensor(0.5833)

    >>> preds = tensor([[0.90, 0.05, 0.05],
    ...                 [0.05, 0.90, 0.05],
    ...                 [0.05, 0.05, 0.90],
    ...                 [0.85, 0.05, 0.10],
    ...                 [0.10, 0.10, 0.80]])
    >>> target = tensor([0, 1, 1, 2, 2])
    >>> eer = EER(task="multiclass", num_classes=3)
    >>> eer(preds, target)
    tensor([0.0000, 0.4167, 0.4167])

BinaryEER

- class torchmetrics.classification.BinaryEER(thresholds=None, ignore_index=None, validate_args=True, normalization='sigmoid', **kwargs)

Compute the Equal Error Rate (EER) for a binary classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

As input to forward and update the metric accepts the following input:

- preds (Tensor): A float tensor of shape (N, ...) containing probabilities or logits for each observation. If preds has values outside the [0,1] range, the input is treated as logits and a sigmoid is applied automatically per element.
- target (Tensor): An int tensor of shape (N, ...) containing ground truth labels, and therefore only containing {0,1} values (except if ignore_index is specified). The value 1 always encodes the positive class.
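The logits rule for preds can be pictured with a small pure-Python sketch. The helper name `normalize_binary_preds` is hypothetical, and checking the whole input at once (rather than element by element) is an assumption of this example.

```python
import math


def normalize_binary_preds(preds):
    """Apply an element-wise sigmoid only if some value lies outside [0, 1]."""
    looks_like_logits = any(p < 0.0 or p > 1.0 for p in preds)
    if not looks_like_logits:
        return list(preds)  # already probabilities, left untouched
    return [1.0 / (1.0 + math.exp(-p)) for p in preds]


print(normalize_binary_preds([0.0, 0.5, 1.0]))  # [0.0, 0.5, 1.0]
print(normalize_binary_preds([-2.0, 0.0, 3.0])[1])  # 0.5
```

A practical consequence is that a batch of logits that happens to fall entirely inside [0,1] would be read as probabilities, so passing probabilities explicitly avoids ambiguity.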

As output to forward and compute the metric returns the following output:

- b_eer (Tensor): A single scalar with the EER score.

Additional dimension ... will be flattened into the batch dimension.

The implementation supports both a non-binned, accurate version and a binned version that is less accurate but more memory efficient. Setting the thresholds argument to None activates the non-binned version, which uses memory of size \(\mathcal{O}(n_{samples})\), whereas setting the thresholds argument to an integer, a list or a 1d tensor uses a binned version with memory of size \(\mathcal{O}(n_{thresholds})\) (constant memory).
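To make the memory trade-off concrete, here is a minimal sketch of a binned accumulator whose state holds only per-threshold counters, so its size does not grow with the number of samples. The names `make_state`, `update` and `compute_eer` are hypothetical, and the first-minimum tie-breaking over thresholds visited from high to low is an assumption of this example.

```python
from fractions import Fraction


def make_state(num_thresholds):
    """State whose size depends only on the number of thresholds."""
    thresholds = [Fraction(i, num_thresholds - 1) for i in range(num_thresholds)]
    return {
        "thresholds": thresholds,
        # accepted[i] = [negatives, positives] with score >= thresholds[i]
        "accepted": [[0, 0] for _ in thresholds],
        # totals = [number of negatives, number of positives] seen so far
        "totals": [0, 0],
    }


def update(state, scores, labels):
    """Fold one batch into the counters; the raw scores are not stored."""
    for score, label in zip(scores, labels):
        state["totals"][label] += 1
        for i, thr in enumerate(state["thresholds"]):
            if score >= thr:
                state["accepted"][i][label] += 1


def compute_eer(state):
    """EER from the counters, visiting thresholds from high to low."""
    n_neg, n_pos = state["totals"]
    best_gap, best_eer = None, None
    for fp, tp in reversed(state["accepted"]):
        far = Fraction(fp, n_neg)
        frr = Fraction(n_pos - tp, n_pos)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:  # keep the first minimum
            best_gap, best_eer = gap, (far + frr) / 2
    return float(best_eer)


state = make_state(5)
update(state, [0, 0.5], [0, 1])
update(state, [0.7, 0.8], [1, 0])
print(compute_eer(state))  # 0.75
```

With five bins the result is coarser than the non-binned value for the same data, which is the accuracy cost the paragraph above refers to.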

- Parameters:

  - thresholds (Union[int, list[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.
  - kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.
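The accepted forms of the thresholds argument can be summarized with a short sketch. The helper `resolve_thresholds` is hypothetical and handles only None, an int and a list of floats; the 1d tensor case is left out to keep the example free of third-party imports.

```python
def resolve_thresholds(thresholds):
    """Turn a `thresholds` argument into an explicit list of bins, or None."""
    if thresholds is None:
        return None  # non-binned: thresholds are derived from the data
    if isinstance(thresholds, int):
        if thresholds < 2:
            raise ValueError("an integer `thresholds` must be larger than 1")
        # Linearly spaced values from 0 to 1, both ends included.
        return [i / (thresholds - 1) for i in range(thresholds)]
    return [float(t) for t in thresholds]  # user-supplied bins, used as given


print(resolve_thresholds(5))  # [0.0, 0.25, 0.5, 0.75, 1.0]
```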

Example

    >>> from torch import tensor
    >>> from torchmetrics.classification import BinaryEER
    >>> preds = tensor([0, 0.5, 0.7, 0.8])
    >>> target = tensor([0, 1, 1, 0])
    >>> metric = BinaryEER(thresholds=None)
    >>> metric(preds, target)
    tensor(0.5000)
    >>> b_eer = BinaryEER(thresholds=5)
    >>> b_eer(preds, target)
    tensor(0.7500)

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

  - val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling metric.forward or metric.compute or a list of these results. If no value is provided, will automatically call metric.compute and plot that result.
  - ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

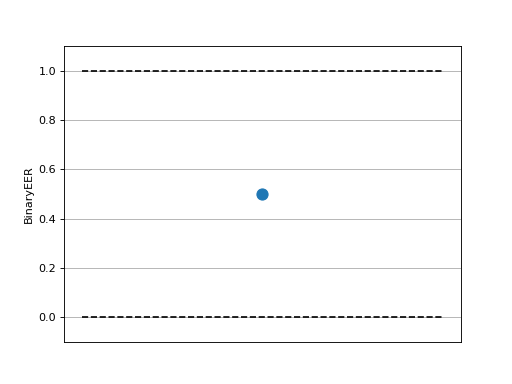

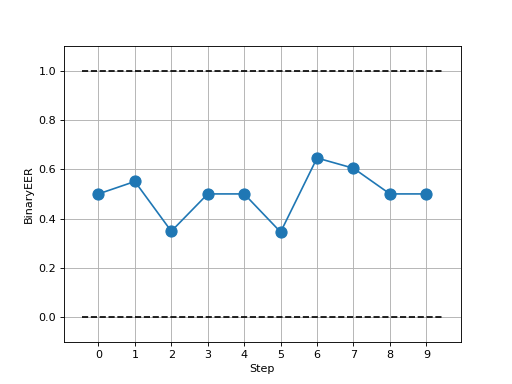

    >>> # Example plotting a single value
    >>> import torch
    >>> from torchmetrics.classification import BinaryEER
    >>> metric = BinaryEER()
    >>> metric.update(torch.rand(20,), torch.randint(2, (20,)))
    >>> fig_, ax_ = metric.plot()

    >>> # Example plotting multiple values
    >>> import torch
    >>> from torchmetrics.classification import BinaryEER
    >>> metric = BinaryEER()
    >>> values = []
    >>> for _ in range(10):
    ...     values.append(metric(torch.rand(20,), torch.randint(2, (20,))))
    >>> fig_, ax_ = metric.plot(values)

MulticlassEER

- class torchmetrics.classification.MulticlassEER(num_classes, thresholds=None, average=None, ignore_index=None, validate_args=True, **kwargs)

Compute the Equal Error Rate (EER) for a multiclass classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

As input to forward and update the metric accepts the following input:

- preds (Tensor): A float tensor of shape (N, C, ...) containing probabilities or logits for each observation. If preds has values outside the [0,1] range, the input is treated as logits and a softmax is applied automatically per sample.
- target (Tensor): An int tensor of shape (N, ...) containing ground truth labels, and therefore only containing values in the [0, n_classes-1] range (except if ignore_index is specified).
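For the multiclass case the same idea applies with a softmax across the class dimension. The sketch below uses a hypothetical helper, `normalize_multiclass_preds`, and assumes the range check is made over the whole input before any row is transformed.

```python
import math


def normalize_multiclass_preds(rows):
    """Apply a per-sample softmax only if some value lies outside [0, 1]."""
    if all(0.0 <= p <= 1.0 for row in rows for p in row):
        return [list(row) for row in rows]  # already probabilities
    normalized = []
    for row in rows:
        shift = max(row)  # subtract the row maximum for numerical stability
        exps = [math.exp(p - shift) for p in row]
        total = sum(exps)
        normalized.append([e / total for e in exps])
    return normalized


probs = normalize_multiclass_preds([[2.0, 0.0, -1.0], [0.5, 0.5, 3.0]])
print([round(sum(row), 6) for row in probs])  # [1.0, 1.0]
```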

As output to forward and compute the metric returns the following output:

- mc_eer (Tensor): If average=None then a 1d tensor of shape (n_classes, ) will be returned with the EER score per class. If average="macro"|"micro" then a single scalar will be returned.
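The per-class output can be read as a one-vs-rest computation: each class column is scored as its own binary problem. The sketch below is an assumption-laden illustration in pure Python (hypothetical names `binary_eer_sketch` and `per_class_eer`, exact fractions, first-minimum tie-breaking); it uses the three-class data from the legacy example on this page.

```python
from fractions import Fraction


def binary_eer_sketch(scores, labels):
    """EER for one binary problem; needs at least one positive and one negative."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    best_gap, best_eer = None, None
    for thr in sorted(set(scores), reverse=True):
        fp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 0)
        fn = sum(1 for s, y in zip(scores, labels) if s < thr and y == 1)
        far, frr = Fraction(fp, n_neg), Fraction(fn, n_pos)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:  # keep the first minimum
            best_gap, best_eer = gap, (far + frr) / 2
    return float(best_eer)


def per_class_eer(preds, target, num_classes):
    """One-vs-rest: class c is positive, every other class is negative."""
    result = []
    for c in range(num_classes):
        scores = [row[c] for row in preds]
        labels = [1 if t == c else 0 for t in target]
        result.append(round(binary_eer_sketch(scores, labels), 4))
    return result


preds = [[0.90, 0.05, 0.05],
         [0.05, 0.90, 0.05],
         [0.05, 0.05, 0.90],
         [0.85, 0.05, 0.10],
         [0.10, 0.10, 0.80]]
target = [0, 1, 1, 2, 2]
print(per_class_eer(preds, target, num_classes=3))  # [0.0, 0.4167, 0.4167]
```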

Additional dimension ... will be flattened into the batch dimension.

The implementation supports both a non-binned, accurate version and a binned version that is less accurate but more memory efficient. Setting the thresholds argument to None activates the non-binned version, which uses memory of size \(\mathcal{O}(n_{samples})\), whereas setting the thresholds argument to an integer, a list or a 1d tensor uses a binned version with memory of size \(\mathcal{O}(n_{thresholds} \times n_{classes})\) (constant memory).

- Parameters:

  - num_classes (int) – Integer specifying the number of classes.
  - thresholds (Union[int, list[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - average (Optional[Literal['micro', 'macro']]) – Whether aggregation should be applied. The aggregation is applied to the underlying ROC curves. By default, the EER is not aggregated and a score for each class is returned. If average is set to "micro", the metric will aggregate the curves by one-hot encoding the targets and flattening the predictions, considering all classes jointly as a binary problem. If average is set to "macro", the metric will aggregate the curves by first interpolating the curves from each class at a combined set of thresholds and then averaging over the classwise interpolated curves. See averaging curve objects for more info on the different averaging methods.
  - ignore_index (Optional[int]) – Specifies a target value that is ignored and does not contribute to the metric calculation.
  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.
  - kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.
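The "micro" option described for average reduces the multiclass problem to a single binary one. A minimal sketch of that reduction follows; the helper name `micro_flatten` is hypothetical, and only the one-hot encoding and flattening step is shown, not the EER computation that would follow.

```python
def micro_flatten(preds, target, num_classes):
    """One-hot encode the targets and flatten scores and labels together."""
    flat_scores, flat_labels = [], []
    for row, true_class in zip(preds, target):
        for c in range(num_classes):
            flat_scores.append(row[c])
            flat_labels.append(1 if c == true_class else 0)
    return flat_scores, flat_labels


scores, labels = micro_flatten([[0.9, 0.05, 0.05], [0.1, 0.1, 0.8]], [0, 2], 3)
print(scores)  # [0.9, 0.05, 0.05, 0.1, 0.1, 0.8]
print(labels)  # [1, 0, 0, 0, 0, 1]
```

Every (sample, class) pair becomes one binary observation, so classes with many samples weigh more in the result than under "macro" averaging.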

Examples

    >>> from torch import tensor
    >>> from torchmetrics.classification import MulticlassEER
    >>> preds = tensor([[0.75, 0.05, 0.05, 0.05, 0.05],
    ...                 [0.05, 0.75, 0.05, 0.05, 0.05],
    ...                 [0.05, 0.05, 0.75, 0.05, 0.05],
    ...                 [0.05, 0.05, 0.05, 0.75, 0.05]])
    >>> target = tensor([0, 1, 3, 2])
    >>> metric = MulticlassEER(num_classes=5, average="macro", thresholds=None)
    >>> metric(preds, target)
    tensor(0.4667)
    >>> mc_eer = MulticlassEER(num_classes=5, average=None, thresholds=None)
    >>> mc_eer(preds, target)
    tensor([0.0000, 0.0000, 0.6667, 0.6667, 1.0000])

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

  - val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling metric.forward or metric.compute or a list of these results. If no value is provided, will automatically call metric.compute and plot that result.
  - ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

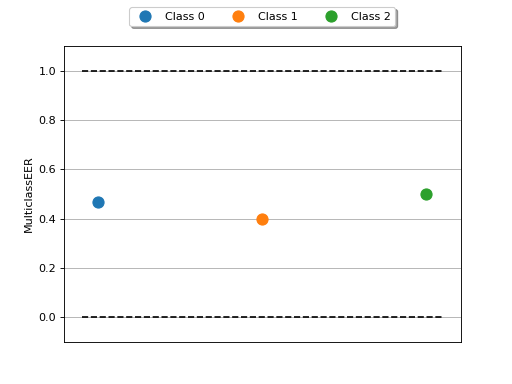

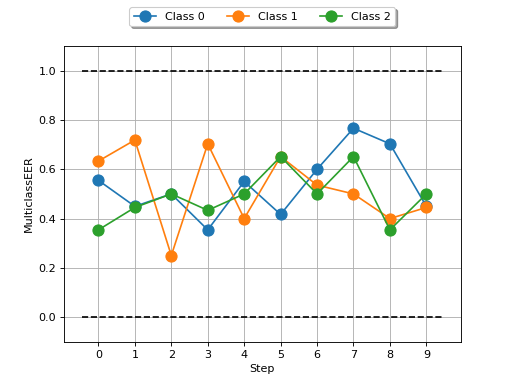

    >>> # Example plotting a single value
    >>> import torch
    >>> from torchmetrics.classification import MulticlassEER
    >>> metric = MulticlassEER(num_classes=3)
    >>> metric.update(torch.randn(20, 3), torch.randint(3, (20,)))
    >>> fig_, ax_ = metric.plot()

    >>> # Example plotting multiple values
    >>> import torch
    >>> from torchmetrics.classification import MulticlassEER
    >>> metric = MulticlassEER(num_classes=3)
    >>> values = []
    >>> for _ in range(10):
    ...     values.append(metric(torch.randn(20, 3), torch.randint(3, (20,))))
    >>> fig_, ax_ = metric.plot(values)

MultilabelEER

- class torchmetrics.classification.MultilabelEER(num_labels, thresholds=None, ignore_index=None, validate_args=True, **kwargs)

Compute the Equal Error Rate (EER) for a multilabel classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

As input to forward and update the metric accepts the following input:

- preds (Tensor): A float tensor of shape (N, C, ...) containing probabilities or logits for each observation. If preds has values outside the [0,1] range, the input is treated as logits and a sigmoid is applied automatically per element.
- target (Tensor): An int tensor of shape (N, C, ...) containing ground truth labels, and therefore only containing {0,1} values (except if ignore_index is specified).

As output to forward and compute the metric returns the following output:

- ml_eer (Tensor): A 1d tensor of shape (n_labels, ) will be returned with the EER score per label.

Additional dimension ... will be flattened into the batch dimension.

The implementation supports both a non-binned, accurate version and a binned version that is less accurate but more memory efficient. Setting the thresholds argument to None activates the non-binned version, which uses memory of size \(\mathcal{O}(n_{samples})\), whereas setting the thresholds argument to an integer, a list or a 1d tensor uses a binned version with memory of size \(\mathcal{O}(n_{thresholds} \times n_{labels})\) (constant memory).
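The effect of binning on a per-label score can be seen in a small pure-Python sketch that treats each label column as an independent binary problem. The names `eer_for_label` and `multilabel_eer_sketch` are hypothetical, and the exact-fraction arithmetic and first-minimum tie-breaking (thresholds visited from high to low) are assumptions of this example. The data is the multilabel example used elsewhere on this page.

```python
from fractions import Fraction


def eer_for_label(scores, labels, num_thresholds=None):
    """EER for one label; data-driven thresholds if num_thresholds is None."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if num_thresholds is None:
        thresholds = sorted(set(scores), reverse=True)
    else:
        steps = reversed(range(num_thresholds))
        thresholds = [Fraction(i, num_thresholds - 1) for i in steps]
    best_gap, best_eer = None, None
    for thr in thresholds:
        fp = sum(1 for s, y in zip(scores, labels) if s >= thr and y == 0)
        fn = sum(1 for s, y in zip(scores, labels) if s < thr and y == 1)
        far, frr = Fraction(fp, n_neg), Fraction(fn, n_pos)
        gap = abs(far - frr)
        if best_gap is None or gap < best_gap:  # keep the first minimum
            best_gap, best_eer = gap, (far + frr) / 2
    return float(best_eer)


def multilabel_eer_sketch(preds, target, num_thresholds=None):
    """Score every label column separately."""
    columns = zip(zip(*preds), zip(*target))
    return [round(eer_for_label(s, y, num_thresholds), 4) for s, y in columns]


preds = [[0.75, 0.05, 0.35],
         [0.45, 0.75, 0.05],
         [0.05, 0.55, 0.75],
         [0.05, 0.65, 0.05]]
target = [[1, 0, 1], [0, 0, 0], [0, 1, 1], [1, 1, 1]]
print(multilabel_eer_sketch(preds, target))     # [0.5, 0.5, 0.1667]
print(multilabel_eer_sketch(preds, target, 5))  # [0.5, 0.75, 0.1667]
```

Only the second label changes under five bins: the threshold at which its FAR and FRR meet falls between two bin edges.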

- Parameters:

  - average – Defines the reduction that is applied over labels. Should be one of the following:

    - micro: Sum score over all labels
    - macro: Calculate score for each label and average them
    - weighted: calculates score for each label and computes weighted average using their support
    - "none" or None: calculates score for each label and applies no reduction

  - thresholds (Union[int, list[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.
  - kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.

Example

    >>> from torch import tensor
    >>> from torchmetrics.classification import MultilabelEER
    >>> preds = tensor([[0.75, 0.05, 0.35],
    ...                 [0.45, 0.75, 0.05],
    ...                 [0.05, 0.55, 0.75],
    ...                 [0.05, 0.65, 0.05]])
    >>> target = tensor([[1, 0, 1],
    ...                  [0, 0, 0],
    ...                  [0, 1, 1],
    ...                  [1, 1, 1]])
    >>> ml_eer = MultilabelEER(num_labels=3, thresholds=None)
    >>> ml_eer(preds, target)
    tensor([0.5000, 0.5000, 0.1667])

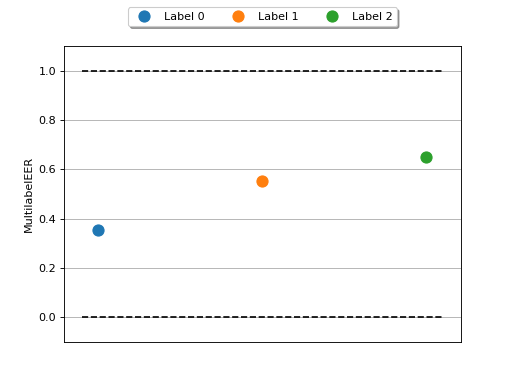

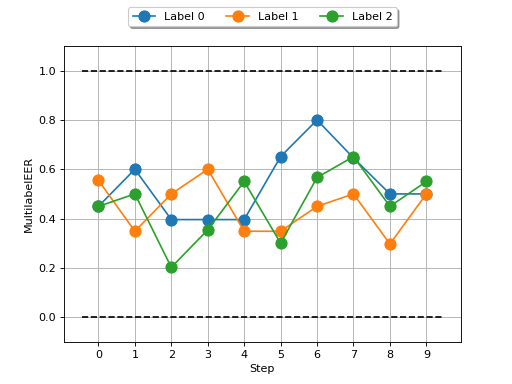

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

  - val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling metric.forward or metric.compute or a list of these results. If no value is provided, will automatically call metric.compute and plot that result.
  - ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

    >>> # Example plotting a single value
    >>> import torch
    >>> from torchmetrics.classification import MultilabelEER
    >>> metric = MultilabelEER(num_labels=3)
    >>> metric.update(torch.rand(20, 3), torch.randint(2, (20, 3)))
    >>> fig_, ax_ = metric.plot()

    >>> # Example plotting multiple values
    >>> import torch
    >>> from torchmetrics.classification import MultilabelEER
    >>> metric = MultilabelEER(num_labels=3)
    >>> values = []
    >>> for _ in range(10):
    ...     values.append(metric(torch.rand(20, 3), torch.randint(2, (20, 3))))
    >>> fig_, ax_ = metric.plot(values)

Functional Interface

- torchmetrics.functional.classification.eer(preds, target, task, thresholds=None, num_classes=None, num_labels=None, average=None, ignore_index=None, validate_args=True)

Compute the Equal Error Rate (EER) metric.

This function is a simple wrapper to get the task-specific versions of this metric, which is done by setting the task argument to either 'binary', 'multiclass' or 'multilabel'. See the documentation of binary_eer(), multiclass_eer() and multilabel_eer() for the specific details of how each argument is used, along with examples.

- Parameters:

  - preds (Tensor) – Predictions from model (logits or probabilities)
  - task (Literal['binary', 'multiclass', 'multilabel']) – Type of task, either 'binary', 'multiclass' or 'multilabel'
  - thresholds (Union[int, List[float], Tensor, None]) – Thresholds used for computing the ROC curve
  - num_classes (Optional[int]) – Number of classes (for multiclass task)
  - num_labels (Optional[int]) – Number of labels (for multilabel task)
  - average (Optional[Literal['micro', 'macro']]) – Method to average EER over multiple classes/labels
  - ignore_index (Optional[int]) – Specify a target value that is ignored
  - validate_args (bool) – Bool indicating whether to validate input arguments

- Return type:

- Legacy Example:

    >>> import torch
    >>> from torchmetrics.functional.classification import eer
    >>> preds = torch.tensor([0.13, 0.26, 0.08, 0.19, 0.34])
    >>> target = torch.tensor([0, 0, 1, 1, 1])
    >>> eer(preds, target, task='binary')
    tensor(0.5833)

    >>> preds = torch.tensor([[0.90, 0.05, 0.05],
    ...                       [0.05, 0.90, 0.05],
    ...                       [0.05, 0.05, 0.90],
    ...                       [0.85, 0.05, 0.10],
    ...                       [0.10, 0.10, 0.80]])
    >>> target = torch.tensor([0, 1, 1, 2, 2])
    >>> eer(preds, target, task='multiclass', num_classes=3)
    tensor([0.0000, 0.4167, 0.4167])
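The wrapper behaviour described for the task argument can be pictured as a simple dispatch. The sketch below is purely illustrative: `dispatch_eer` is a hypothetical helper that returns the name of the task-specific function rather than calling it, and the validation messages are assumptions, not the library's actual errors.

```python
def dispatch_eer(task, num_classes=None, num_labels=None):
    """Return the name of the task-specific function for a given task."""
    if task == "binary":
        return "binary_eer"
    if task == "multiclass":
        if not isinstance(num_classes, int):
            raise ValueError("`num_classes` must be an int for task='multiclass'")
        return "multiclass_eer"
    if task == "multilabel":
        if not isinstance(num_labels, int):
            raise ValueError("`num_labels` must be an int for task='multilabel'")
        return "multilabel_eer"
    raise ValueError(f"Unsupported task: {task!r}")


print(dispatch_eer("multiclass", num_classes=3))  # multiclass_eer
```

The point of the sketch is that num_classes only matters for the multiclass task and num_labels only for the multilabel task, which is why both default to None in the wrapper's signature.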

binary_eer

- torchmetrics.functional.classification.binary_eer(preds, target, thresholds=None, ignore_index=None, validate_args=True)

Compute the Equal Error Rate (EER) for a binary classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

- Parameters:

  - thresholds (Union[int, List[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - ignore_index (Optional[int]) – Specifies a target value that is ignored and does not contribute to the metric calculation.
  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.

- Return type:

- Returns:

A single scalar with the eer score

Example

    >>> import torch
    >>> from torchmetrics.functional.classification import binary_eer
    >>> preds = torch.tensor([0, 0.5, 0.7, 0.8])
    >>> target = torch.tensor([0, 1, 1, 0])
    >>> binary_eer(preds, target, thresholds=None)
    tensor(0.5000)
    >>> binary_eer(preds, target, thresholds=5)
    tensor(0.7500)

multiclass_eer

- torchmetrics.functional.classification.multiclass_eer(preds, target, num_classes, thresholds=None, average=None, ignore_index=None, validate_args=True)

Compute the Equal Error Rate (EER) for a multiclass classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

- Parameters:

  - num_classes (int) – Integer specifying the number of classes.
  - thresholds (Union[int, List[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - average (Optional[Literal['micro', 'macro']]) – Whether aggregation should be applied. The aggregation is applied to the underlying ROC curves. By default, the EER is not aggregated and a score for each class is returned. If average is set to "micro", the metric will aggregate the curves by one-hot encoding the targets and flattening the predictions, considering all classes jointly as a binary problem. If average is set to "macro", the metric will aggregate the curves by first interpolating the curves from each class at a combined set of thresholds and then averaging over the classwise interpolated curves. See averaging curve objects for more info on the different averaging methods.
  - ignore_index (Optional[int]) – Specifies a target value that is ignored and does not contribute to the metric calculation.
  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.

- Return type:

- Returns:

If average=None|"none" then a 1d tensor of shape (n_classes, ) will be returned with the EER score per class. If average="macro"|"micro" then a single scalar is returned.

Example

    >>> import torch
    >>> from torchmetrics.functional.classification import multiclass_eer
    >>> preds = torch.tensor([[0.75, 0.05, 0.05, 0.05, 0.05],
    ...                       [0.05, 0.75, 0.05, 0.05, 0.05],
    ...                       [0.05, 0.05, 0.75, 0.05, 0.05],
    ...                       [0.05, 0.05, 0.05, 0.75, 0.05]])
    >>> target = torch.tensor([0, 1, 3, 2])
    >>> multiclass_eer(preds, target, num_classes=5, average="macro", thresholds=None)
    tensor(0.4667)
    >>> multiclass_eer(preds, target, num_classes=5, average=None, thresholds=None)
    tensor([0.0000, 0.0000, 0.6667, 0.6667, 1.0000])
    >>> multiclass_eer(preds, target, num_classes=5, average="macro", thresholds=5)
    tensor(0.4667)
    >>> multiclass_eer(preds, target, num_classes=5, average=None, thresholds=5)
    tensor([0.0000, 0.0000, 0.6667, 0.6667, 1.0000])
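The ignore_index parameter documented above says that a given target value "does not contribute to the metric calculation". One way to picture this, as an assumption rather than a description of how the library handles it internally, is to drop the affected samples before scoring. The helper `drop_ignored` is hypothetical.

```python
def drop_ignored(preds, target, ignore_index):
    """Remove every sample whose target equals ignore_index."""
    kept = [(p, t) for p, t in zip(preds, target) if t != ignore_index]
    return [p for p, _ in kept], [t for _, t in kept]


preds = [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.3, 0.3, 0.4]]
target = [0, -1, 2]
print(drop_ignored(preds, target, ignore_index=-1))
# ([[0.7, 0.2, 0.1], [0.3, 0.3, 0.4]], [0, 2])
```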

multilabel_eer

- torchmetrics.functional.classification.multilabel_eer(preds, target, num_labels, thresholds=None, ignore_index=None, validate_args=True)

Compute the Equal Error Rate (EER) for a multilabel classification task.

\[\text{EER} = \frac{\text{FAR}_{t^*} + \text{FRR}_{t^*}}{2}, \quad \text{where } t^* = \arg\min_t \left|\text{FAR}_t - \text{FRR}_t\right|\]

The Equal Error Rate (EER) is the operating point at which the False Acceptance Rate (FAR, i.e. the False Positive Rate, FPR) and the False Rejection Rate (FRR, i.e. the False Negative Rate, 1 - TPR) are equal or, in practice, where their absolute difference is minimized. A lower EER value signifies higher system accuracy.

- Parameters:

  - thresholds (Union[int, List[float], Tensor, None]) – Can be one of:

    - If set to None, will use a non-binned approach where thresholds are dynamically calculated from all the data. Most accurate but also most memory consuming approach.
    - If set to an int (larger than 1), will use that number of thresholds linearly spaced from 0 to 1 as bins for the calculation.
    - If set to a list of floats, will use the indicated thresholds in the list as bins for the calculation.
    - If set to a 1d tensor of floats, will use the indicated thresholds in the tensor as bins for the calculation.

  - ignore_index (Optional[int]) – Specifies a target value that is ignored and does not contribute to the metric calculation.
  - validate_args (bool) – bool indicating if input arguments and tensors should be validated for correctness. Set to False for faster computations.

- Return type:

- Returns:

A 1d tensor of shape (n_labels, ) will be returned with the EER score per label.

Example

    >>> import torch
    >>> from torchmetrics.functional.classification import multilabel_eer
    >>> preds = torch.tensor([[0.75, 0.05, 0.35],
    ...                       [0.45, 0.75, 0.05],
    ...                       [0.05, 0.55, 0.75],
    ...                       [0.05, 0.65, 0.05]])
    >>> target = torch.tensor([[1, 0, 1],
    ...                        [0, 0, 0],
    ...                        [0, 1, 1],
    ...                        [1, 1, 1]])
    >>> multilabel_eer(preds, target, num_labels=3, thresholds=None)
    tensor([0.5000, 0.5000, 0.1667])
    >>> multilabel_eer(preds, target, num_labels=3, thresholds=5)
    tensor([0.5000, 0.7500, 0.1667])