Char Error Rate

Module Interface

- class torchmetrics.text.CharErrorRate(**kwargs)

Character Error Rate (CER) is a metric of the performance of an automatic speech recognition (ASR) system.

This value indicates the fraction of characters that were incorrectly predicted. The lower the value, the better the performance of the ASR system, with a CharErrorRate of 0 being a perfect score. Because insertions are counted against the reference length, the value is not bounded above by 1. Character error rate is computed as:

\[CharErrorRate = \frac{S + D + I}{N} = \frac{S + D + I}{S + D + C}\]

where:

\(S\) is the number of substitutions,

\(D\) is the number of deletions,

\(I\) is the number of insertions,

\(C\) is the number of correct characters,

\(N\) is the number of characters in the reference (\(N = S + D + C\)).
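The formula can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the torchmetrics implementation: it assumes that \(S + D + I\) for an optimal alignment equals the character-level Levenshtein distance between prediction and reference, and that edit operations and reference lengths are summed over all pairs before dividing. The helper names `char_edit_distance` and `cer_sketch` are hypothetical.

```python
from typing import Sequence, Union


def char_edit_distance(pred: str, ref: str) -> int:
    """Character-level Levenshtein distance, i.e. the minimal S + D + I."""
    # At the start of row i, prev[j] is the distance between pred[:i-1] and ref[:j]
    prev = list(range(len(ref) + 1))
    for i, p_ch in enumerate(pred, start=1):
        curr = [i] + [0] * len(ref)
        for j, r_ch in enumerate(ref, start=1):
            curr[j] = min(
                prev[j] + 1,                   # extra char in pred: insertion (I)
                curr[j - 1] + 1,               # ref char missing from pred: deletion (D)
                prev[j - 1] + (p_ch != r_ch),  # match (cost 0) or substitution (S)
            )
        prev = curr
    return prev[-1]


def cer_sketch(preds: Union[str, Sequence[str]], target: Union[str, Sequence[str]]) -> float:
    """Pooled CER: total edit operations divided by total reference characters N."""
    # Accept a single string or a list of strings, mirroring the metric's inputs
    preds = [preds] if isinstance(preds, str) else list(preds)
    target = [target] if isinstance(target, str) else list(target)
    if len(preds) != len(target):
        raise ValueError("preds and target must contain the same number of strings")
    errors = sum(char_edit_distance(p, t) for p, t in zip(preds, target))
    total = sum(len(t) for t in target)  # N = S + D + C summed over all references
    if total == 0:
        # Assumption of this sketch: CER is treated as undefined without reference characters
        raise ValueError("CER is undefined when the references contain no characters")
    return errors / total
```

A prediction that is much longer than its reference shows why the score can exceed 1: `cer_sketch("abc", "a")` needs two insertions against a one-character reference and returns 2.0.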

Compute the CharErrorRate score of transcribed segments against their references.

As input to forward and update, the metric accepts the following input:

- preds (str): Transcription(s) to score as a string or list of strings
- target (str): Reference(s) for each speech input as a string or list of strings

As output of forward and compute, the metric returns the following output:

- cer (Tensor): A tensor with the Character Error Rate score
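Inputs passed to update over several calls are combined when compute runs. The sketch below assumes, consistently with the formula, that the metric keeps running totals of edit operations and of reference characters and divides only at the end, so each batch is weighted by its reference length rather than averaged as a per-batch score. `RunningCER` and `_edit_distance` are hypothetical names used for illustration, not torchmetrics internals.

```python
from typing import Sequence, Union


def _edit_distance(a: str, b: str) -> int:
    """Character-level Levenshtein distance (substitution, deletion, insertion each cost 1)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (ca != cb)))
        prev = curr
    return prev[-1]


class RunningCER:
    """Hypothetical stand-in for a stateful CER metric with update/compute/reset."""

    def __init__(self) -> None:
        self.reset()

    def reset(self) -> None:
        self.errors = 0  # running sum of S + D + I
        self.total = 0   # running sum of reference lengths N

    def update(self, preds: Union[str, Sequence[str]], target: Union[str, Sequence[str]]) -> None:
        preds = [preds] if isinstance(preds, str) else list(preds)
        target = [target] if isinstance(target, str) else list(target)
        for p, t in zip(preds, target):
            self.errors += _edit_distance(p, t)
            self.total += len(t)

    def compute(self) -> float:
        if self.total == 0:
            raise ValueError("no reference characters seen yet")
        return self.errors / self.total
```

For example, updating with `("abd", "abc")` (1 error over 3 characters) and then `("x", "abcdefg")` (7 errors over 7 characters) gives a pooled score of 8 / 10 = 0.8, whereas averaging the two per-batch scores would give about 0.667.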

- Parameters:

kwargs (Any) – Additional keyword arguments, see Advanced metric settings for more info.

Examples

>>> from torchmetrics.text import CharErrorRate
>>> preds = ["this is the prediction", "there is an other sample"]
>>> target = ["this is the reference", "there is another one"]
>>> cer = CharErrorRate()
>>> cer(preds, target)
tensor(0.3415)
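The example output can be checked by hand against the formula. The two references contain 21 and 20 characters, so \(N = 41\). In the first pair, after the shared prefix "this is the ", turning "prediction" into "reference" takes one deletion and seven substitutions (8 edits). In the second pair, the extra space in "an other" is deleted and "sample" becomes "one" with two substitutions and three deletions (6 edits). Pooling both pairs:

\[CharErrorRate = \frac{8 + 6}{21 + 20} = \frac{14}{41} \approx 0.3415\]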

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

val (Union[Tensor, Sequence[Tensor], None]) – Either a single result from calling metric.forward or metric.compute, or a list of these results. If no value is provided, metric.compute is called automatically and that result is plotted.

ax (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

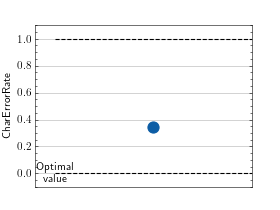

>>> # Example plotting a single value
>>> from torchmetrics.text import CharErrorRate
>>> metric = CharErrorRate()
>>> preds = ["this is the prediction", "there is an other sample"]
>>> target = ["this is the reference", "there is another one"]
>>> metric.update(preds, target)
>>> fig_, ax_ = metric.plot()

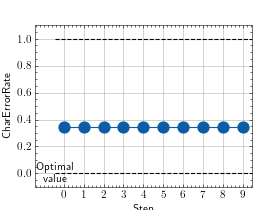

>>> # Example plotting multiple values
>>> from torchmetrics.text import CharErrorRate
>>> metric = CharErrorRate()
>>> preds = ["this is the prediction", "there is an other sample"]
>>> target = ["this is the reference", "there is another one"]
>>> values = [ ]
>>> for _ in range(10):
...     values.append(metric(preds, target))
>>> fig_, ax_ = metric.plot(values)

Functional Interface

- torchmetrics.functional.text.char_error_rate(preds, target)

Compute the Character Error Rate, a measure of the performance of an automatic speech recognition system.

This value indicates the fraction of characters that were incorrectly predicted. The lower the value, the better the performance of the ASR system, with a CER of 0 being a perfect score.

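- Parameters:

preds (Union[str, List[str]]) – Transcription(s) to score as a string or list of strings

target (Union[str, List[str]]) – Reference(s) for each speech input as a string or list of strings

- Return type:

Tensor

- Returns: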

Character error rate score

Examples

>>> from torchmetrics.functional.text import char_error_rate
>>> preds = ["this is the prediction", "there is an other sample"]
>>> target = ["this is the reference", "there is another one"]
>>> char_error_rate(preds=preds, target=target)
tensor(0.3415)