Researchers hope that watermarking language model output can help curb plagiarism, while LAION and CarperAI are helping the public replicate ChatGPT on their own. Shutterstock has released its generative AI platform, and Zoom hopes its new chatbot will be a game changer for support teams. Let’s dive in!

Research Highlights

- University of Maryland researchers proposed a framework for watermarking proprietary language models. They report that the watermark can be embedded with little impact on text quality and detected with an efficient open-source algorithm, without access to the language model API or parameters. Their argument is that watermarking model output, that is, embedding signals into generated text that are invisible to humans but algorithmically detectable from a short span of tokens, can reduce the potential negative effects of large language models.

- Researchers proposed StyleGAN-T, a model that claims to address the specific requirements of large-scale text-to-image synthesis. According to its authors, these include stable training on a variety of datasets, strong text alignment, and the tradeoff between controllable variation and text alignment. StyleGAN-T is claimed to run faster and produce higher sample quality than distilled diffusion models, which were the previous state of the art in fast text-to-image synthesis.

- Researchers from Meta have developed Multiview Compressive Coding (MCC), a framework that represents individual objects or entire scenes as 3D points and relies on category-agnostic, large-scale training on a variety of RGB-D videos. Understanding objects and scenes from a single image is a key objective of visual recognition, and 2D recognition has advanced greatly as a result of extensive learning and general-purpose representations. By comparison, 3D recognition presents new difficulties because parts of the scene are occluded and not visible in the image. MCC claims to compress the input appearance and geometry and to predict the 3D structure by querying a 3D-aware decoder.
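
The watermarking highlight above describes signals that readers cannot see but that an algorithm can detect from a short span of tokens. As rough intuition only, here is a toy Python sketch of one way such a signal could work: a keyed hash of the previous token marks roughly half of the vocabulary as "green", the generator samples only green tokens, and a detector counts green tokens and reports a z-score. The key, the word-level "tokens", the uniform sampler standing in for a language model, and all function names are assumptions made for illustration. This is not the researchers' implementation.

```python
import hashlib
import math
import random

GREEN_FRACTION = 0.5  # assumed share of the vocabulary marked "green" at each step


def is_green(prev_token: str, token: str, key: str = "demo-key") -> bool:
    """Pseudo-randomly mark `token` as green, seeded by the key and the previous token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] / 256 < GREEN_FRACTION


def generate(vocab, length, watermark, seed=0):
    """Toy 'model': samples uniformly, restricted to green tokens when watermarking."""
    rng = random.Random(seed)
    tokens = [rng.choice(vocab)]
    while len(tokens) < length:
        candidates = vocab
        if watermark:
            green = [t for t in vocab if is_green(tokens[-1], t)]
            candidates = green or vocab  # fall back if no token happens to be green
        tokens.append(rng.choice(candidates))
    return tokens


def z_score(tokens):
    """How far the green-token count sits above what unwatermarked text would give."""
    n = len(tokens) - 1  # number of (previous token, token) pairs
    hits = sum(is_green(prev, tok) for prev, tok in zip(tokens, tokens[1:]))
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (hits - expected) / std


vocab = [f"tok{i}" for i in range(200)]
marked = generate(vocab, 60, watermark=True)
plain = generate(vocab, 60, watermark=False)
print(f"watermarked z = {z_score(marked):.2f}, plain z = {z_score(plain):.2f}")
```

In this sketch the detector needs only the key and the tokens, not the model that produced them, and even a 60-token span is enough to separate watermarked text from unmarked text.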

ML Engineering Highlights

- Shutterstock announced the launch of its AI image generation platform. The company says its text-to-image technology does not need complex prompts to generate “breathtaking images” and that a single word is the only input needed. In addition to supporting over 20 languages, the company promises to pay artists for all their contributions.

- The Biden White House made public a final report outlining a three-year plan to create a National Artificial Intelligence Research Resource (NAIRR). The NAIRR is intended to be a publicly accessible, shared infrastructure for AI research that will cost $2.6 billion over six years. The strategy calls for developing a “democratized” AI infrastructure for researchers and students in four phases spread over three years, with access to both governmental and nongovernmental data sources.

- Zoom announced a new “intelligent conversational” live chat solution powered by AI. The Zoom Virtual Agent is designed to help businesses provide efficient, cost-effective customer service by reducing handle times for human customer support staff. Zoom says the agent differs from traditional, “rules-based” chatbots in its ability to “accurately interpret what customers or employees are asking, even if they use everyday language”.

Open Source Highlights

Researchers from LAION and CarperAI released OpenAssistant and trlX, respectively, two open-source implementations of ChatGPT’s training algorithm. All the source code is publicly available on GitHub.

Tutorial of the Week

We recently optimized the way we serve Stable Diffusion to make it three times faster. Interested in running your own benchmarks, or using Lightning to get started with a production-ready Stable Diffusion endpoint? Learn more here!

Upcoming Event

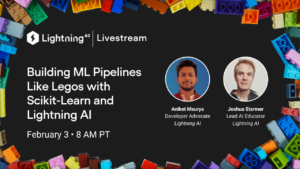

What do LEGO bricks and training scikit-learn models have in common? Join us for a livestream on Twitter/LinkedIn next week to find out! Add to your calendar.

Community Spotlight

Want your work featured? Contact us on Discord or by email.

- This repo provides two templates that begin to address problems you might have run into when trying to use Lightning for projects with multiple model and dataset files, as well as for tasks with fixed demand points like classification and super-resolution. Kudos to GitHub user Miracleyoo!

- PyTorch Keypoint Detection contains a Python package for 2D keypoint detection using Lightning and wandb. The package is currently used by the AI and Robotics research group at Ghent University, and the repo includes several applications, such as detecting the corners of cardboard boxes so that a robot can close them.

- This Fine-Tuning Scheduler is an extension for PyTorch Lightning that enhances model experimentation with flexible fine-tuning schedules. It’s easy to use and offers several features that facilitate model research and exploration.

Kudos to our community for another week of amazing work!

Lightning AI Highlights

- Learn how to code a long short-term memory unit from scratch and then train it using the latest Jupyter Notebook from Josh Starmer’s StatQuest!

- Unit 4 of Sebastian Raschka’s Deep Learning Fundamentals course, Training Multilayer Neural Networks, is now live!

- The latest episode of The AI Buzz is now live! Check it out for a riveting conversation between Josh Starmer and Luca Antiga, Lightning’s CTO, about big data, reinforcement learning, and aligning models.

- Did you know you’ll receive $30 USD of free cloud credits when you sign up for a Lightning account? Those credits can be used for any kind of cloud compute across the Lightning platform. Get ready to start building!

Don’t Miss the Submission Deadline

- SIGIR 2023: International ACM SIGIR Conference on Research and Development in Information Retrieval. Jul 23-27, 2023. (Taipei, Taiwan). Submission deadline: February 1, 2023, 03:59 GMT-0800

- KDD 2023: Conference on Knowledge Discovery and Data Mining. (Long Beach, California). Submission deadline: February 3, 2023, 03:59:59 GMT-0800

- IROS 2023: International Conference on Intelligent Robots and Systems. Oct 1 – 5, 2023. (Detroit, Michigan). Submission deadline: March 1, 2023

- ICCV 2023: International Conference on Computer Vision. Oct 2 – 6, 2023. (Paris, France). Submission deadline: March 8, 2023 23:59 GMT