Mean-Average-Precision (mAP)

Module Interface

- class torchmetrics.detection.mean_ap.MeanAveragePrecision(box_format='xyxy', iou_type='bbox', iou_thresholds=None, rec_thresholds=None, max_detection_thresholds=None, class_metrics=False, **kwargs)

Compute the Mean-Average-Precision (mAP) and Mean-Average-Recall (mAR) for object detection predictions.

$$\text{mAP} = \frac{1}{n} \sum_{i=1}^{n} AP_i$$

where $AP_i$ is the average precision for class $i$ and $n$ is the number of classes. The average precision is defined as the area under the precision-recall curve. For object detection, recall and precision are defined based on the intersection over union (IoU) between the predicted bounding boxes and the ground truth bounding boxes: if two boxes have an IoU > t (with t being some threshold), they are considered a match and the prediction is counted as a true positive. Precision is then the number of true positives divided by the number of all detected boxes, and recall is the number of true positives divided by the number of all ground truth boxes.
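The matching rule described above can be sketched in plain Python. This is only an illustration, not the pycocotools backend: the helper names `box_iou` and `precision_recall` are hypothetical, and boxes are assumed to be in `(xmin, ymin, xmax, ymax)` format.

```python
from typing import Sequence, Tuple


def box_iou(a: Sequence[float], b: Sequence[float]) -> float:
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    num_true_positives: int, num_detections: int, num_ground_truths: int
) -> Tuple[float, float]:
    """Precision = TP / detections, recall = TP / ground truth boxes."""
    precision = num_true_positives / num_detections if num_detections else 0.0
    recall = num_true_positives / num_ground_truths if num_ground_truths else 0.0
    return precision, recall


# Boxes taken from the class example on this page.
pred_box = (258.0, 41.0, 606.0, 285.0)
gt_box = (214.0, 41.0, 562.0, 285.0)

iou = box_iou(pred_box, gt_box)
is_match = iou > 0.5  # threshold t = 0.5
print(round(iou, 4), is_match)  # 0.7755 True

# 2 true positives among 4 detections, with 5 ground truth boxes in total.
print(precision_recall(2, 4, 5))  # (0.5, 0.4)
```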

As input to `forward` and `update`, the metric accepts the following input:

- `preds` (List): A list of dictionaries, one per image, each containing the following key-values:
  - `boxes` (FloatTensor): shape `(num_boxes, 4)`, containing `num_boxes` detection boxes in the format specified in the constructor. By default, this method expects `(xmin, ymin, xmax, ymax)` in absolute image coordinates.
  - `scores` (FloatTensor): shape `(num_boxes)`, containing detection scores for the boxes.
  - `labels` (IntTensor): shape `(num_boxes)`, containing 0-indexed detection classes for the boxes.
  - `masks` (bool): shape `(num_boxes, image_height, image_width)`, containing boolean masks. Only required when `iou_type="segm"`.

- `target` (List): A list of dictionaries, one per image, each containing the following key-values:
  - `boxes` (FloatTensor): shape `(num_boxes, 4)`, containing `num_boxes` ground truth boxes in the format specified in the constructor. By default, this method expects `(xmin, ymin, xmax, ymax)` in absolute image coordinates.
  - `labels` (IntTensor): shape `(num_boxes)`, containing 0-indexed ground truth classes for the boxes.
  - `masks` (bool): shape `(num_boxes, image_height, image_width)`, containing boolean masks. Only required when `iou_type="segm"`.
  - `iscrowd` (IntTensor): shape `(num_boxes)`, containing 0/1 values indicating whether the bounding box/mask marks a crowd of objects. This value is optional; if not provided, it is automatically set to 0.
  - `area` (FloatTensor): shape `(num_boxes)`, containing the area of the object. This value is optional; if not provided, it is automatically calculated from the bounding boxes/masks provided. It only affects which samples contribute to the `map_small`, `map_medium` and `map_large` values.
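To illustrate how the `area` value decides which size-specific result a sample contributes to, here is a minimal sketch. It assumes the usual COCO convention of splitting at 32² and 96² pixels, which this page does not state explicitly. The helper names are hypothetical, and handling of areas exactly on a boundary is simplified.

```python
from typing import Sequence

# Assumed COCO-style area boundaries, in squared pixels.
SMALL_MAX_AREA = 32 ** 2   # 1024
MEDIUM_MAX_AREA = 96 ** 2  # 9216


def box_area_xyxy(box: Sequence[float]) -> float:
    """Area of a (xmin, ymin, xmax, ymax) box, used when `area` is not given."""
    return (box[2] - box[0]) * (box[3] - box[1])


def size_bucket(area: float) -> str:
    """Return which size bucket ('small', 'medium' or 'large') an area falls in."""
    if area < SMALL_MAX_AREA:
        return "small"
    if area < MEDIUM_MAX_AREA:
        return "medium"
    return "large"


# Ground truth box from the class example on this page.
gt_box = (214.0, 41.0, 562.0, 285.0)
area = box_area_xyxy(gt_box)
print(area, size_bucket(area))  # 84912.0 large
```

Under this assumption the example box is large, which is consistent with the example output further down reporting values for `map_large` while `map_small` and `map_medium` are -1.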

As output of `forward` and `compute`, the metric returns the following output:

- `map_dict`: A dictionary containing the following key-values:
  - `map` (Tensor): global mean average precision
  - `map_small` (Tensor): mean average precision for small objects
  - `map_medium` (Tensor): mean average precision for medium objects
  - `map_large` (Tensor): mean average precision for large objects
  - `mar_1` (Tensor): mean average recall for 1 detection per image
  - `mar_10` (Tensor): mean average recall for 10 detections per image
  - `mar_100` (Tensor): mean average recall for 100 detections per image
  - `mar_small` (Tensor): mean average recall for small objects
  - `mar_medium` (Tensor): mean average recall for medium objects
  - `mar_large` (Tensor): mean average recall for large objects
  - `map_50` (Tensor): mean average precision at IoU=0.50 (-1 if 0.5 is not in the list of IoU thresholds)
  - `map_75` (Tensor): mean average precision at IoU=0.75 (-1 if 0.75 is not in the list of IoU thresholds)
  - `map_per_class` (Tensor): mean average precision per observed class (-1 if class metrics are disabled)
  - `mar_100_per_class` (Tensor): mean average recall for 100 detections per image per observed class (-1 if class metrics are disabled)
  - `classes` (Tensor): list of all observed classes

For an example on how to use this metric check the torchmetrics mAP example.

Note

The `map` score is calculated with @[ IoU=self.iou_thresholds | area=all | max_dets=max_detection_thresholds ]. Caution: If the initialization parameters are changed, dictionary keys for mAR can change as well. The default properties are also accessible via fields and will raise an `AttributeError` if not available.

Note

This metric utilizes the official pycocotools implementation as its backend. This means that the metric requires you to have pycocotools installed. In addition we require torchvision version 0.8.0 or newer. Please install with `pip install torchmetrics[detection]`.

- Parameters:

  - `box_format` (Literal['xyxy', 'xywh', 'cxcywh']) – Input format of given boxes. Supported formats are:
    - 'xyxy': boxes are represented via corners, x1, y1 being top left and x2, y2 being bottom right.
    - 'xywh': boxes are represented via corner, width and height, x1, y1 being top left, w, h being width and height. This is the default format used by pycocotools, and all input formats will be converted to it.
    - 'cxcywh': boxes are represented via centre, width and height, cx, cy being the center of the box, w, h being width and height.
  - `iou_type` (Literal['bbox', 'segm']) – Type of input (either masks or bounding boxes) used for computing IoU. Supported IoU types are `["bbox", "segm"]`. If using `"segm"`, masks should be provided in the input.
  - `iou_thresholds` (Optional[List[float]]) – IoU thresholds for evaluation. If set to `None`, it corresponds to the stepped range `[0.5,...,0.95]` with step `0.05`. Else provide a list of floats.
  - `rec_thresholds` (Optional[List[float]]) – Recall thresholds for evaluation. If set to `None`, it corresponds to the stepped range `[0,...,1]` with step `0.01`. Else provide a list of floats.
  - `max_detection_thresholds` (Optional[List[int]]) – Thresholds on max detections per image. If set to `None`, the thresholds `[1, 10, 100]` are used. Else provide a list of ints.
  - `class_metrics` (bool) – Option to enable per-class metrics for mAP and mAR_100. Has a performance impact.
  - `kwargs` (Any) – Additional keyword arguments, see Advanced metric settings for more info.
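The three `box_format` options describe the same rectangle in different ways. As a minimal sketch of the conversion to 'xywh' mentioned above (a hypothetical helper `to_xywh` operating on plain tuples, not the library's own conversion routine):

```python
from typing import Sequence, Tuple


def to_xywh(box: Sequence[float], box_format: str = "xyxy") -> Tuple[float, float, float, float]:
    """Convert a single box from 'xyxy', 'xywh' or 'cxcywh' to (x, y, w, h)."""
    if box_format == "xyxy":
        x1, y1, x2, y2 = box
        return (x1, y1, x2 - x1, y2 - y1)
    if box_format == "xywh":
        x, y, w, h = box
        return (x, y, w, h)
    if box_format == "cxcywh":
        cx, cy, w, h = box
        # The top-left corner is half a width/height away from the centre.
        return (cx - w / 2, cy - h / 2, w, h)
    raise ValueError(f"Unsupported box_format: {box_format!r}")


# The same box (taken from the class example) expressed in each format.
print(to_xywh((258.0, 41.0, 606.0, 285.0), "xyxy"))    # (258.0, 41.0, 348.0, 244.0)
print(to_xywh((258.0, 41.0, 348.0, 244.0), "xywh"))    # (258.0, 41.0, 348.0, 244.0)
print(to_xywh((432.0, 163.0, 348.0, 244.0), "cxcywh")) # (258.0, 41.0, 348.0, 244.0)
```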

- Raises:

  - ModuleNotFoundError – If `pycocotools` is not installed
  - ModuleNotFoundError – If `torchvision` is not installed or the installed version is lower than 0.8.0
  - ValueError – If `box_format` is not one of `"xyxy"`, `"xywh"` or `"cxcywh"`
  - ValueError – If `iou_type` is not one of `"bbox"` or `"segm"`
  - ValueError – If `iou_thresholds` is not None or a list of floats
  - ValueError – If `rec_thresholds` is not None or a list of floats
  - ValueError – If `max_detection_thresholds` is not None or a list of ints
  - ValueError – If `class_metrics` is not a boolean

Example

    >>> from torch import tensor
    >>> from torchmetrics.detection import MeanAveragePrecision
    >>> preds = [
    ...     dict(
    ...         boxes=tensor([[258.0, 41.0, 606.0, 285.0]]),
    ...         scores=tensor([0.536]),
    ...         labels=tensor([0]),
    ...     )
    ... ]
    >>> target = [
    ...     dict(
    ...         boxes=tensor([[214.0, 41.0, 562.0, 285.0]]),
    ...         labels=tensor([0]),
    ...     )
    ... ]
    >>> metric = MeanAveragePrecision()
    >>> metric.update(preds, target)
    >>> from pprint import pprint
    >>> pprint(metric.compute())
    {'classes': tensor(0, dtype=torch.int32),
     'map': tensor(0.6000),
     'map_50': tensor(1.),
     'map_75': tensor(1.),
     'map_large': tensor(0.6000),
     'map_medium': tensor(-1.),
     'map_per_class': tensor(-1.),
     'map_small': tensor(-1.),
     'mar_1': tensor(0.6000),
     'mar_10': tensor(0.6000),
     'mar_100': tensor(0.6000),
     'mar_100_per_class': tensor(-1.),
     'mar_large': tensor(0.6000),
     'mar_medium': tensor(-1.),
     'mar_small': tensor(-1.)}
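One way to see where the `map` value of 0.6000 in this example comes from is to redo the arithmetic by hand. The sketch below is not how the backend computes the metric. It assumes the special case of one prediction and one ground truth box of the same class, where the average precision at a given IoU threshold is 1 if the pair matches and 0 otherwise.

```python
# Boxes from the example above, in (xmin, ymin, xmax, ymax) format.
pred = (258.0, 41.0, 606.0, 285.0)
gt = (214.0, 41.0, 562.0, 285.0)

# Intersection over union of the two boxes.
inter_w = min(pred[2], gt[2]) - max(pred[0], gt[0])
inter_h = min(pred[3], gt[3]) - max(pred[1], gt[1])
inter = inter_w * inter_h
area_pred = (pred[2] - pred[0]) * (pred[3] - pred[1])
area_gt = (gt[2] - gt[0]) * (gt[3] - gt[1])
iou = inter / (area_pred + area_gt - inter)

# Default IoU thresholds: 0.50, 0.55, ..., 0.95.
thresholds = [0.5 + 0.05 * i for i in range(10)]

# Single-pair assumption: AP is 1.0 when the boxes match, else 0.0.
ap_per_threshold = [1.0 if iou >= t else 0.0 for t in thresholds]
mean_ap = sum(ap_per_threshold) / len(ap_per_threshold)

print(round(iou, 4))        # 0.7755
print(ap_per_threshold[0])  # 1.0, AP at IoU=0.50
print(ap_per_threshold[5])  # 1.0, AP at IoU=0.75
print(mean_ap)              # 0.6
```

The pair matches at the six thresholds from 0.50 to 0.75 and fails at the four from 0.80 to 0.95, giving 6/10 = 0.6.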

- static coco_to_tm(coco_preds, coco_target, iou_type='bbox')

Utility function for converting .json coco format files to the input format of this metric.

The function accepts a file for the predictions and a file for the target in coco format and converts them to a list of dictionaries containing the boxes, labels and scores in the input format of this metric.

- Parameters:

- Returns:

preds: List of dictionaries containing the predictions in the input format of this metric

target: List of dictionaries containing the targets in the input format of this metric

Example

    >>> # File formats are defined at https://cocodataset.org/#format-data
    >>> # Example files can be found at
    >>> # https://github.com/cocodataset/cocoapi/tree/master/results
    >>> from torchmetrics.detection import MeanAveragePrecision
    >>> preds, target = MeanAveragePrecision.coco_to_tm(
    ...     "instances_val2014_fakebbox100_results.json.json",
    ...     "val2014_fake_eval_res.txt.json",
    ...     iou_type="bbox",
    ... )
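To give a rough idea of what such a conversion involves, the sketch below groups COCO-style detection results into one dictionary per image. It is not the library's implementation: the helper `group_results_by_image` is hypothetical, the field names (`image_id`, `category_id`, `bbox`, `score`) are assumed from the COCO results format, and plain lists stand in for tensors. Boxes are left exactly as stored in the records.

```python
from collections import defaultdict
from typing import Any, Dict, List


def group_results_by_image(results: List[Dict[str, Any]]) -> List[Dict[str, list]]:
    """Group flat COCO-style detection records into one dict per image."""
    grouped: Dict[int, Dict[str, list]] = defaultdict(
        lambda: {"boxes": [], "scores": [], "labels": []}
    )
    for det in results:
        entry = grouped[det["image_id"]]
        entry["boxes"].append(det["bbox"])
        entry["scores"].append(det["score"])
        entry["labels"].append(det["category_id"])
    # One dictionary per image, ordered by image id.
    return [grouped[image_id] for image_id in sorted(grouped)]


# Made-up records, for illustration only.
coco_results = [
    {"image_id": 2, "category_id": 1, "bbox": [10.0, 20.0, 30.0, 40.0], "score": 0.9},
    {"image_id": 1, "category_id": 0, "bbox": [5.0, 5.0, 50.0, 60.0], "score": 0.7},
    {"image_id": 2, "category_id": 0, "bbox": [0.0, 0.0, 15.0, 15.0], "score": 0.4},
]

per_image = group_results_by_image(coco_results)
print(len(per_image))          # 2
print(per_image[1]["labels"])  # [1, 0]
```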

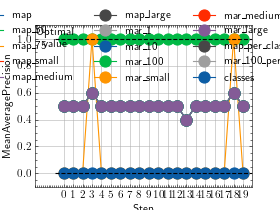

- plot(val=None, ax=None)

Plot a single or multiple values from the metric.

- Parameters:

  - `val` (Union[Dict[str, Tensor], Sequence[Dict[str, Tensor]], None]) – Either a single result from calling `metric.forward` or `metric.compute`, or a list of these results. If no value is provided, `metric.compute` is called automatically and its result is plotted.
  - `ax` (Optional[Axes]) – A matplotlib axis object. If provided, the plot is added to that axis.

- Return type:

- Returns:

Figure object and Axes object

- Raises:

ModuleNotFoundError – If matplotlib is not installed

    >>> from torch import tensor
    >>> from torchmetrics.detection.mean_ap import MeanAveragePrecision
    >>> preds = [dict(
    ...     boxes=tensor([[258.0, 41.0, 606.0, 285.0]]),
    ...     scores=tensor([0.536]),
    ...     labels=tensor([0]),
    ... )]
    >>> target = [dict(
    ...     boxes=tensor([[214.0, 41.0, 562.0, 285.0]]),
    ...     labels=tensor([0]),
    ... )]
    >>> metric = MeanAveragePrecision()
    >>> metric.update(preds, target)
    >>> fig_, ax_ = metric.plot()

    >>> # Example plotting multiple values
    >>> import torch
    >>> from torchmetrics.detection.mean_ap import MeanAveragePrecision
    >>> preds = lambda: [dict(
    ...     boxes=torch.tensor([[258.0, 41.0, 606.0, 285.0]]) + torch.randint(10, (1,4)),
    ...     scores=torch.tensor([0.536]) + 0.1*torch.rand(1),
    ...     labels=torch.tensor([0]),
    ... )]
    >>> target = [dict(
    ...     boxes=torch.tensor([[214.0, 41.0, 562.0, 285.0]]),
    ...     labels=torch.tensor([0]),
    ... )]
    >>> metric = MeanAveragePrecision()
    >>> vals = []
    >>> for _ in range(20):
    ...     vals.append(metric(preds(), target))
    >>> fig_, ax_ = metric.plot(vals)

- tm_to_coco(name='tm_map_input')

Utility function for converting the input for this metric to coco format and saving it to a json file.

This function should be used after calling `.update(...)` or `.forward(...)` on all data that should be written to the file, as the input is then internally cached. The function then converts the information to coco format and writes it to json files.

- Parameters:

  - `name` (str) – Name of the output file, which will be appended with "_preds.json" and "_target.json"

- Return type:

Example

    >>> from torch import tensor
    >>> from torchmetrics.detection import MeanAveragePrecision
    >>> preds = [
    ...     dict(
    ...         boxes=tensor([[258.0, 41.0, 606.0, 285.0]]),
    ...         scores=tensor([0.536]),
    ...         labels=tensor([0]),
    ...     )
    ... ]
    >>> target = [
    ...     dict(
    ...         boxes=tensor([[214.0, 41.0, 562.0, 285.0]]),
    ...         labels=tensor([0]),
    ...     )
    ... ]
    >>> metric = MeanAveragePrecision()
    >>> metric.update(preds, target)
    >>> metric.tm_to_coco("tm_map_input")