What is a Strategy?

A Strategy controls how the model is distributed across training, evaluation, and prediction when it is used by the Trainer. You choose it by passing either a strategy alias ("ddp", "ddp_spawn", "deepspeed", and so on) or a custom strategy instance to the strategy parameter of the Trainer.

The Strategy in PyTorch Lightning handles the following responsibilities:

Launch and teardown of training processes (if applicable).

Set up communication between processes (NCCL, GLOO, MPI, and so on).

Provide a unified communication interface for reduction, broadcast, and so on.

Owns the LightningModule.

Handles/owns optimizers and schedulers.
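To make "reduction" and "broadcast" concrete, here is a minimal sketch that simulates both collectives across several ranks inside a single process. The class ToyCollectives and its method names are hypothetical and exist only for illustration; this is not Lightning's Strategy API, and no real process group is involved.

```python
from statistics import fmean
from typing import Any, List


class ToyCollectives:
    """Simulates collectives across `world_size` ranks in one process (illustration only)."""

    def __init__(self, world_size: int) -> None:
        self.world_size = world_size

    def _check(self, per_rank_values: List[Any]) -> None:
        # Each simulated rank must contribute exactly one value.
        if len(per_rank_values) != self.world_size:
            raise ValueError("expected one value per rank")

    def reduce_mean(self, per_rank_values: List[float]) -> List[float]:
        # Reduction: every rank contributes a value and every rank receives the mean.
        self._check(per_rank_values)
        result = fmean(per_rank_values)
        return [result] * self.world_size

    def broadcast(self, per_rank_values: List[Any], src: int = 0) -> List[Any]:
        # Broadcast: every rank ends up with the value held by rank `src`.
        self._check(per_rank_values)
        return [per_rank_values[src]] * self.world_size


collectives = ToyCollectives(world_size=4)
synced_losses = collectives.reduce_mean([1.0, 2.0, 3.0, 6.0])  # one loss per rank
synced_seeds = collectives.broadcast([42, 7, 13, 99], src=0)   # rank 0 decides the seed
```

After the reduction, every simulated rank holds the same averaged loss, and after the broadcast, every rank holds the seed chosen by rank 0. A real strategy offers the same kind of operations on top of a backend such as NCCL or GLOO.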

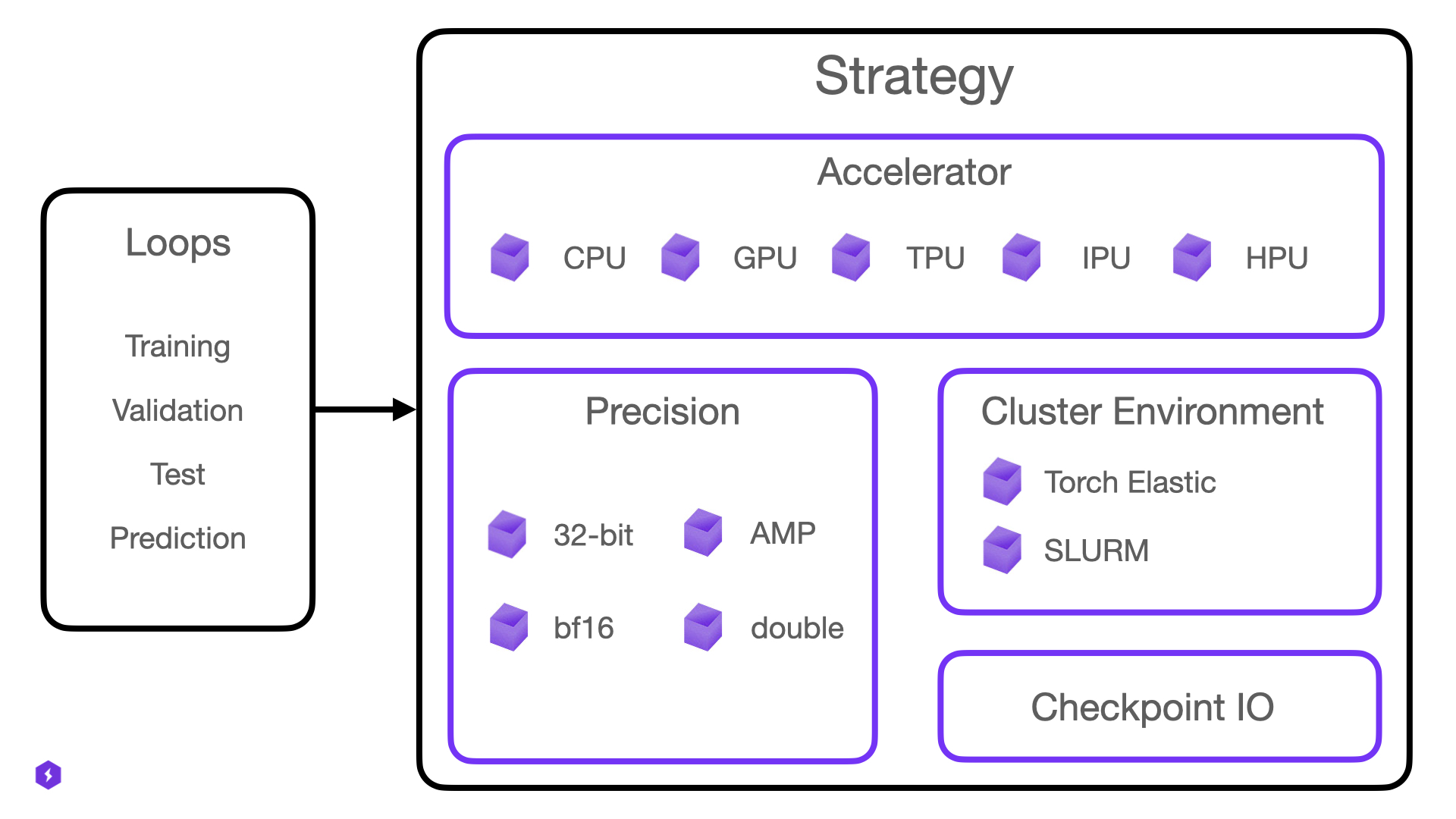

A Strategy is a composition of one Accelerator, one Precision Plugin, a CheckpointIO plugin, and other optional plugins such as the ClusterEnvironment.

We expose Strategies mainly for expert users who want to extend Lightning with support for new hardware or new distributed backends (e.g. a backend not yet supported by PyTorch itself).
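The composition described above can be pictured with a small sketch. All class names below (ToyAccelerator, ToyPrecision, ToyCheckpointIO, ToyClusterEnvironment, ToyStrategy) are hypothetical stand-ins, not Lightning classes; the sketch only shows the shape of "one accelerator, one precision plugin, one checkpoint plugin, plus optional extras".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ToyAccelerator:
    device_type: str  # e.g. "cpu" or "gpu"


@dataclass
class ToyPrecision:
    mode: str  # e.g. "32-true" or "16-mixed"


@dataclass
class ToyCheckpointIO:
    suffix: str = ".ckpt"


@dataclass
class ToyClusterEnvironment:
    world_size: int = 1


@dataclass
class ToyStrategy:
    # Required parts: exactly one of each.
    accelerator: ToyAccelerator
    precision: ToyPrecision
    checkpoint_io: ToyCheckpointIO
    # Optional part: only needed when running on a cluster.
    cluster_environment: Optional[ToyClusterEnvironment] = None

    def describe(self) -> str:
        # Fall back to a single process when no cluster environment is attached.
        env = self.cluster_environment
        world_size = env.world_size if env is not None else 1
        return f"{self.accelerator.device_type}/{self.precision.mode}/world_size={world_size}"


local = ToyStrategy(ToyAccelerator("cpu"), ToyPrecision("32-true"), ToyCheckpointIO())
cluster = ToyStrategy(
    ToyAccelerator("gpu"),
    ToyPrecision("16-mixed"),
    ToyCheckpointIO(),
    cluster_environment=ToyClusterEnvironment(world_size=8),
)
```

Because the parts are independent objects, swapping one of them (for example, a different precision mode) does not require touching the others.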

Selecting a Built-in Strategy

Built-in strategies can be selected in two ways.

Pass the shorthand name to the strategy Trainer argument.

Import a Strategy from lightning.pytorch.strategies, instantiate it, and pass it to the strategy Trainer argument.

The latter allows you to configure further options on the specific strategy. Here are some examples:

from lightning.pytorch import Trainer

from lightning.pytorch.strategies import DDPStrategy

# Training with the DistributedDataParallel strategy on 4 GPUs

trainer = Trainer(strategy="ddp", accelerator="gpu", devices=4)

# Training with the DistributedDataParallel strategy on 4 GPUs, with options configured

trainer = Trainer(strategy=DDPStrategy(static_graph=True), accelerator="gpu", devices=4)

# Training with the DDP Spawn strategy using auto accelerator selection

trainer = Trainer(strategy="ddp_spawn", accelerator="auto", devices=4)

# Training with the DeepSpeed strategy on available GPUs

trainer = Trainer(strategy="deepspeed", accelerator="gpu", devices="auto")

# Training with the DDP strategy using 3 CPU processes

trainer = Trainer(strategy="ddp", accelerator="cpu", devices=3)

# Training with the DDP Spawn strategy on 8 TPU cores

trainer = Trainer(strategy="ddp_spawn", accelerator="tpu", devices=8)
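One way to picture the two selection paths (shorthand name versus configured instance) is a small name-to-factory registry. The sketch below is an assumption-laden toy: TOY_REGISTRY, ToyStrategy, ToyDDP, and resolve_strategy are hypothetical names, and the options stored in the registry are illustrative. It is not how Lightning resolves strategies internally.

```python
from typing import Callable, Dict, Union


class ToyStrategy:
    """Hypothetical base class; stores whatever options it was built with."""

    def __init__(self, **options) -> None:
        self.options = options


class ToyDDP(ToyStrategy):
    pass


# Hypothetical registry: shorthand name -> factory that builds a preconfigured strategy.
TOY_REGISTRY: Dict[str, Callable[[], ToyStrategy]] = {
    "ddp": lambda: ToyDDP(start_method="popen"),
    "ddp_spawn": lambda: ToyDDP(start_method="spawn"),
}


def resolve_strategy(strategy: Union[str, ToyStrategy]) -> ToyStrategy:
    # Path 2: an already-configured instance is used as-is.
    if isinstance(strategy, ToyStrategy):
        return strategy
    # Path 1: a shorthand name is looked up and a default instance is built.
    if strategy not in TOY_REGISTRY:
        raise ValueError(f"Unknown strategy {strategy!r}; choose from {sorted(TOY_REGISTRY)}")
    return TOY_REGISTRY[strategy]()


by_name = resolve_strategy("ddp_spawn")
configured = ToyDDP(static_graph=True)
by_instance = resolve_strategy(configured)
```

The shorthand path gives you sensible defaults with one string, while the instance path keeps every constructor option under your control.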

The table below lists the relevant strategies available in Lightning with their corresponding shorthand names:

| Name | Class | Description |
|---|---|---|
| fsdp | | Strategy for Fully Sharded Data Parallel training. Learn more. |
| ddp | | Strategy for multi-process single-device training on one or multiple nodes. Learn more. |
| ddp_spawn | | Same as “ddp” but launches processes using |
| deepspeed | | Provides capabilities to run training using the DeepSpeed library, with training optimizations for large billion parameter models. Learn more. |
| xla | | Strategy for training on multiple TPU devices using the |
| single_xla | | Strategy for training on a single XLA device, like TPUs. Learn more. |

Third-party Strategies

There are powerful third-party strategies that integrate well with Lightning but aren’t maintained as part of the lightning package.

Check out the gallery here.

Create a Custom Strategy

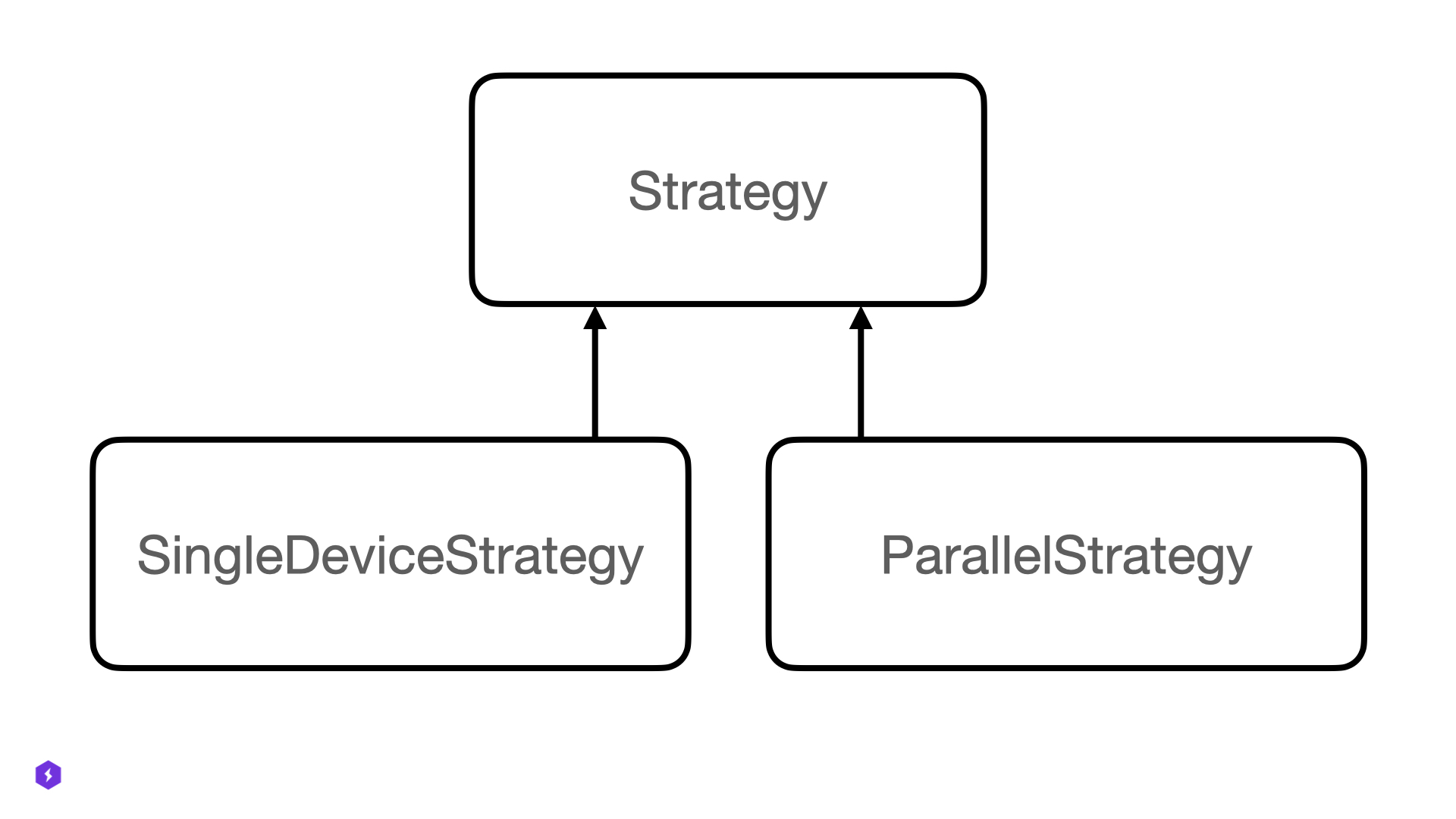

Every strategy in Lightning is a subclass of one of the main base classes: Strategy, SingleDeviceStrategy or ParallelStrategy.

As an expert user, you may choose to extend either an existing built-in Strategy or create a completely new one by subclassing the base classes.

from lightning.pytorch.strategies import DDPStrategy


class CustomDDPStrategy(DDPStrategy):
    def configure_ddp(self):
        # MyCustomDistributedDataParallel is a placeholder for your own wrapper class
        self.model = MyCustomDistributedDataParallel(
            self.model,
            device_ids=...,
        )

    def setup(self, trainer):
        # you can access the accelerator and plugins directly
        self.accelerator.setup()
        self.precision_plugin.connect(...)

The custom strategy can then be passed into the Trainer directly via the strategy parameter.

# custom strategy

trainer = Trainer(strategy=CustomDDPStrategy())

Since the strategy also hosts the Accelerator and various plugins, you can customize all of them to work together as you like:

# custom strategy, with new accelerator and plugins

accelerator = MyAccelerator()

precision_plugin = MyPrecisionPlugin()

strategy = CustomDDPStrategy(accelerator=accelerator, precision_plugin=precision_plugin)

trainer = Trainer(strategy=strategy)
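The subclassing pattern in this section works because a base class calls an overridable hook at a fixed point in its setup. The following framework-free sketch shows that mechanism in isolation. ToyWrapper, ToyDDPStrategy, and CustomToyDDPStrategy are hypothetical names, and the sketch assumes the base setup() invokes configure_ddp(); it does not reproduce Lightning's actual setup sequence.

```python
class ToyWrapper:
    """Stands in for a distributed wrapper around a model (illustration only)."""

    def __init__(self, model, label: str = "default") -> None:
        self.model = model
        self.label = label


class ToyDDPStrategy:
    def __init__(self, model) -> None:
        self.model = model

    def configure_ddp(self) -> None:
        # Hook: wrap the model for distributed training.
        self.model = ToyWrapper(self.model)

    def setup(self) -> None:
        # The base class decides *when* wrapping happens.
        self.configure_ddp()


class CustomToyDDPStrategy(ToyDDPStrategy):
    def configure_ddp(self) -> None:
        # The subclass decides *how* the model is wrapped.
        self.model = ToyWrapper(self.model, label="custom")


default_strategy = ToyDDPStrategy(model="my-model")
default_strategy.setup()

custom_strategy = CustomToyDDPStrategy(model="my-model")
custom_strategy.setup()
```

Only the hook is overridden here, so the rest of the base class behavior is inherited unchanged. Note that a subclass which replaces setup() entirely, without calling the parent implementation, takes over responsibility for invoking any hooks itself.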