The Age of Personal AI

Large language models (LLMs) have rapidly gained popularity across the globe. These models are trained to predict the next word in extensive datasets of raw text, exhibiting emergent abilities to complete tasks they were not specifically trained for (e.g., summarizing a sentence in Shakespearean style or playing the next move in a chess game). Furthermore, LLMs can learn complex tasks in-context and strategize to achieve goals without requiring updates to the model’s weights.

This advancement has unleashed a new generation of applications and use cases that were previously out of reach. In many cases, vast amounts of data, computing power, or coding skills are no longer prerequisites for achieving automation and building AI applications. The age of personal AI has dawned.

Many in the AI community believed that scale alone was responsible for these emergent abilities, which fueled a rush to develop ever larger models. Had that been true, access to these models would have remained limited, confined behind closed APIs and under the exclusive control of a select few. Additionally, the recent trend of withholding details about the training of very large models (arguably for safety reasons) has cast doubt on the potential to use collective knowledge to build a shared future.

The Emergence of LLaMA

Meta recently released LLaMA, a model architecturally similar to GPT that was trained on an exceptionally large number of tokens (over one trillion), more than is typical for models of equivalent size. LLaMA is available in sizes of 7B, 13B, 30B, and 65B parameters and, for a given parameter budget, performs at the level of much larger models. This development has reinforced the idea that size is not the ultimate goal, and that running highly capable LLMs on consumer devices is feasible, further hastening the rise of personal AI.

The open-source community has embraced LLaMA, creating numerous impressive features on top of it, ranging from highly efficient instruction-tuning to aggressive quantization. These features enable LLaMA to run on consumer devices with or without hardware acceleration. Smaller models allow more people to experiment with, build upon, and ultimately understand and control the technology.
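To see why quantization matters for consumer devices, here is a rough back-of-the-envelope sketch of how much memory the weights alone would occupy at different bit widths. It assumes the nominal parameter counts in the size names (7B, 13B, 30B, 65B) and ignores activations, caches, and the small per-block overhead that real quantization schemes add. The function names are illustrative and not part of any library.

```python
# Nominal parameter counts (in billions) taken from the model size names.
# Assumption: real checkpoints differ slightly from these round numbers.
LLAMA_SIZES_B = {"7B": 7, "13B": 13, "30B": 30, "65B": 65}


def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate storage for the weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def footprint_table(bit_widths=(16, 8, 4)):
    """Map each model size to its estimated weight footprint per bit width."""
    return {
        name: {bits: weight_memory_gb(n, bits) for bits in bit_widths}
        for name, n in LLAMA_SIZES_B.items()
    }


if __name__ == "__main__":
    for name, row in footprint_table().items():
        cells = ", ".join(f"{bits}-bit: {gb:.1f} GB" for bits, gb in row.items())
        print(f"{name}: {cells}")
```

Under these assumptions, the 7B model needs roughly 14 GB for its weights at 16 bits per weight and roughly 3.5 GB at 4 bits, which is the difference between needing a large GPU and fitting in the memory of an ordinary laptop.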

LLaMA Licensing

Meta released LLaMA under two separate licenses. The weights were released under a custom, non-commercial license, which is highly relevant and will be discussed in an upcoming post.

On the other hand, the source code for the LLaMA model was released under the GPL, the same family of licenses used for the Linux kernel (LLaMA uses version 3, the kernel version 2). The GPL is a viral license, meaning that integrating all or part of GPL-licensed code into another project requires that project to be distributed under the GPL as well. Companies typically avoid integrating libraries under viral licenses (self-contained software like the Linux kernel is a different case, as its source code is not integrated into one’s own projects), but the issue extends beyond the enterprise.

Experimentation often involves combining different elements, and a GPL project in a non-viral (Apache 2.0, BSD, MIT) ecosystem, such as the deep learning ecosystem, can lead to downstream effects like codebase contamination with incompatible licenses. This has already occurred with LLaMA and is hindering innovation.

Alternative implementations of LLaMA, such as the one in the Hugging Face Transformers library, are available under non-viral licenses. However, the Transformers project is extensive, and navigating LLaMA, quantization, fine-tuning, and sparsity within it can be challenging.

Openness and clarity are closely related.

Introducing Lit-LLaMA

With Lit-LLaMA, our goal is to provide a clean, fully open, non-harmful implementation of the LLaMA source code that can be rapidly developed alongside the community. We began with Andrej Karpathy’s nanoGPT and have been building on it since.

The project has gained momentum, and we are now at a stage where the fundamentals have been addressed, allowing us to initiate experimentation.

Lit-LLaMA is not overly complicated, and that is precisely the point.

Keeping Simplicity Intact

To lead the next wave of AI, it is essential to harness collective knowledge and experimentation. To achieve this, simplicity must be maintained. LLaMA is a straightforward model, and both LoRA and LLaMA-Adapter (which enable parameter-efficient fine-tuning on consumer devices) are simple techniques. Everything can be contained within a single file.
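As an illustration of how little code a technique like LoRA needs, here is a minimal sketch of a LoRA-style linear layer in plain Python. It is not Lit-LLaMA's implementation: the class and function names are hypothetical, the matrices are tiny lists rather than tensors, and a real version would use PyTorch and train `A` and `B` with an optimizer. The idea it shows is the standard one: keep the pretrained weight `W` frozen and learn a low-rank update `B @ A` scaled by `alpha / r`.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in M]


class LoRALinear:
    """Computes y = W x + (alpha / r) * B (A x); W is frozen, A and B are trainable."""

    def __init__(self, d_in, d_out, r=2, alpha=4.0, seed=0):
        rng = random.Random(seed)
        # Stand-in for a pretrained weight matrix; never updated during fine-tuning.
        self.W = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
        # Low-rank factors: A starts random, B starts at zero,
        # so the adapter is a no-op before any training step.
        self.A = [[rng.gauss(0, 0.02) for _ in range(d_in)] for _ in range(r)]
        self.B = [[0.0] * r for _ in range(d_out)]
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.W, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]


def lora_trainable_params(d_in, d_out, r):
    """Number of parameters in the low-rank factors A (r x d_in) and B (d_out x r)."""
    return r * (d_in + d_out)


if __name__ == "__main__":
    layer = LoRALinear(d_in=4, d_out=3)
    print(layer.forward([1.0, 2.0, 3.0, 4.0]))
    # Illustrative sizes: a 4096 x 4096 projection with rank 8.
    print(lora_trainable_params(4096, 4096, 8), "trainable vs", 4096 * 4096, "frozen")
```

With the illustrative sizes above, the adapter trains 65,536 parameters against 16,777,216 frozen ones, about 0.4% of the layer, which is what makes fine-tuning on consumer hardware plausible.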

With Lit-LLaMA, we aim for simplicity and a single-file, from-scratch implementation whenever possible, in order to deliver the best LLaMA implementation available. It is fully open-source and devoid of boilerplate code.

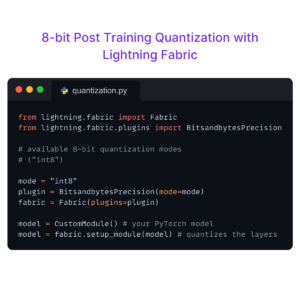

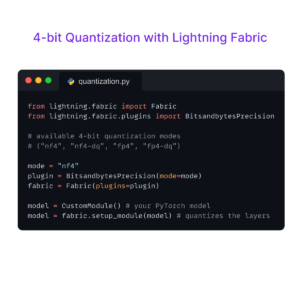

Implementing and training models involves a fair amount of engineering. Libraries like Lightning Fabric and PyTorch Lightning keep that complexity at bay, allowing us to focus on what truly matters.

Where We Go From Here

Lit-LLaMA serves as an experimentation platform. Everyone is encouraged to use it, fork it, and contribute to it—we will be doing the same.

We will apply a similar approach to other models that do not face licensing issues, such as GPT-J (see the note on GPT-J below), always striving to provide the best, most optimized implementations for the community to build upon. Insights from Lit-LLaMA will be integrated into Lightning Fabric and PyTorch Lightning where it makes sense to do so.

In the coming weeks, several key innovations will be introduced to Lit-LLaMA, ranging from running on consumer devices to returning pre-training to the community. We will discuss these developments on our Discord, and you are welcome to join the conversation.

Acknowledgments

- @karpathy for nanoGPT

- @FacebookResearch for the original LLaMA implementation

- @TimDettmers for bitsandbytes

- @Microsoft for LoRA

A Note on GPT-J

GPT-J, a 6B-parameter model, predates LLaMA by nearly two years. The EleutherAI collective trained it on a significant number of tokens (400B). The community is currently rediscovering GPT-J for its instruction-tuning capabilities and liberal licensing (its weights are available under the Apache 2.0 license). As a result, GPT-J serves as a less capable but fully open-source alternative to LLaMA.