LightningModule¶

A LightningModule organizes your PyTorch code into 5 sections

Computations (init).

Train loop (training_step)

Validation loop (validation_step)

Test loop (test_step)

Optimizers (configure_optimizers)

Notice a few things.

It’s the SAME code.

The PyTorch code IS NOT abstracted - just organized.

All the other code that’s not in the LightningModule has been automated for you by the Trainer.

net = Net()
trainer = Trainer()
trainer.fit(net)

There are no .cuda() or .to() calls… Lightning does these for you.

# don't do in lightning
x = torch.Tensor(2, 3)
x = x.cuda()
x = x.to(device)

# do this instead
x = x  # leave it alone!

# or to init a new tensor
new_x = torch.Tensor(2, 3)
new_x = new_x.type_as(x)

Lightning by default handles the distributed sampler for you.

# Don't do in Lightning...
data = MNIST(...)
sampler = DistributedSampler(data)
DataLoader(data, sampler=sampler)

# do this instead
data = MNIST(...)
DataLoader(data)

A LightningModule is a torch.nn.Module but with added functionality. Use it as such!

net = Net.load_from_checkpoint(PATH)
net.freeze()
out = net(x)

Thus, to use Lightning, you just need to organize your code, which takes about 30 minutes (and, let’s be real, is something you should probably do anyway).

Minimal Example¶

Here are the only required methods.

import torch
from torch import nn
from torch.nn import functional as F

import pytorch_lightning as pl


class LitModel(pl.LightningModule):
    def __init__(self):
        super().__init__()
        self.l1 = nn.Linear(28 * 28, 10)

    def forward(self, x):
        # flatten each image to a vector before the linear layer
        return torch.relu(self.l1(x.view(x.size(0), -1)))

    def training_step(self, batch, batch_idx):
        x, y = batch
        y_hat = self(x)
        loss = F.cross_entropy(y_hat, y)
        return loss

    def configure_optimizers(self):
        return torch.optim.Adam(self.parameters(), lr=0.02)

Which you can train by doing:

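import os

from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision.datasets import MNIST

train_loader = DataLoader(MNIST(os.getcwd(), download=True, transform=transforms.ToTensor()))
trainer = pl.Trainer()
model = LitModel()
trainer.fit(model, train_loader)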

The LightningModule has many convenience methods, but the core ones you need to know about are:

| Name | Description |
|---|---|
| init | Define computations here |
| forward | Use for inference only (separate from training_step) |
| training_step | the full training loop |
| validation_step | the full validation loop |
| test_step | the full test loop |
| configure_optimizers | define optimizers and LR schedulers |

Training¶

Training loop¶

To add a training loop use the training_step method

class LitClassifier(pl.LightningModule):

def __init__(self, model):

super().__init__()

self.model = model

def training_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

return loss

Under the hood, Lightning does the following (pseudocode):

# put model in train mode

model.train()

torch.set_grad_enabled(True)

losses = []

for batch in train_dataloader:

# forward

loss = training_step(batch)

losses.append(loss.detach())

# clear gradients

optimizer.zero_grad()

# backward

loss.backward()

# update parameters

optimizer.step()

Training epoch-level metrics¶

If you want to calculate epoch-level metrics and log them, use the .log method

def training_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

# logs metrics for each training_step,

# and the average across the epoch, to the progress bar and logger

self.log("train_loss", loss, on_step=True, on_epoch=True, prog_bar=True, logger=True)

return loss

The .log method automatically reduces the requested metrics across the full epoch. Here’s the pseudocode of what it does under the hood:

outs = []
for batch in train_dataloader:
    # forward
    out = training_step(batch)
    outs.append(out)

    # clear gradients
    optimizer.zero_grad()

    # backward
    loss.backward()

    # update parameters
    optimizer.step()

epoch_metric = torch.mean(torch.stack([x["train_loss"] for x in outs]))
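As a rough illustration of the epoch-level reduction in the pseudocode above, here is a minimal plain-Python sketch. `MetricLogSketch` is a made-up stand-in for the bookkeeping behind `self.log`, not Lightning's actual implementation, and it only covers the default mean reduction.

```python
from statistics import fmean


class MetricLogSketch:
    """Hypothetical stand-in for the per-epoch bookkeeping behind self.log."""

    def __init__(self):
        # metric name -> list of values logged at each step
        self.step_values = {}

    def log(self, name, value):
        # record one step-level value (what on_step=True would report)
        self.step_values.setdefault(name, []).append(float(value))

    def epoch_mean(self, name):
        # reduce all step values with a mean (what on_epoch=True would report)
        return fmean(self.step_values[name])


logger = MetricLogSketch()
for step_loss in [0.9, 0.7, 0.5]:  # pretend these came from three training steps
    logger.log("train_loss", step_loss)

epoch_metric = logger.epoch_mean("train_loss")
```

With `on_step=True` and `on_epoch=True`, both the individual step values and the reduced epoch value are reported.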

Train epoch-level operations¶

If you need to do something with all the outputs of each training_step, override training_epoch_end yourself.

def training_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

preds = ...

return {"loss": loss, "other_stuff": preds}

def training_epoch_end(self, training_step_outputs):

for pred in training_step_outputs:

...

The matching pseudocode is:

outs = []
for batch in train_dataloader:
    # forward
    out = training_step(batch)
    outs.append(out)

    # clear gradients
    optimizer.zero_grad()

    # backward (training_step returned a dict with a "loss" key)
    out["loss"].backward()

    # update parameters
    optimizer.step()

training_epoch_end(outs)

Training with DataParallel¶

When training using an accelerator that splits data from each batch across GPUs, sometimes you might need to aggregate them on the main GPU for processing (dp, or ddp2).

In this case, implement the training_step_end method

def training_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

pred = ...

return {"loss": loss, "pred": pred}

def training_step_end(self, batch_parts):

# predictions from each GPU

predictions = batch_parts["pred"]

# losses from each GPU

losses = batch_parts["loss"]

gpu_0_prediction = predictions[0]

gpu_1_prediction = predictions[1]

# do something with both outputs

return (losses[0] + losses[1]) / 2

def training_epoch_end(self, training_step_outputs):

for out in training_step_outputs:

...

The full pseudocode of what Lightning does under the hood is:

outs = []
for train_batch in train_dataloader:
    batches = split_batch(train_batch)
    dp_outs = []
    for sub_batch in batches:
        # 1
        dp_out = training_step(sub_batch)
        dp_outs.append(dp_out)

    # 2
    out = training_step_end(dp_outs)
    outs.append(out)

# do something with the outputs for all batches
# 3
training_epoch_end(outs)
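The three numbered stages in the pseudocode can be traced with a small runnable sketch in plain Python. Everything here is an illustrative assumption: batches are lists of numbers, the "loss" is just a mean, `split_batch` is a hypothetical helper, and the parts reach `training_step_end` as a list, as in the pseudocode.

```python
def split_batch(batch, num_parts):
    """Hypothetical helper: split a list into num_parts contiguous chunks."""
    size = (len(batch) + num_parts - 1) // num_parts
    return [batch[i:i + size] for i in range(0, len(batch), size)]


def training_step(sub_batch):
    # stage 1: runs once per device on its slice of the batch
    return {"loss": sum(sub_batch) / len(sub_batch)}


def training_step_end(batch_parts):
    # stage 2: combine the per-device results for one batch
    losses = [part["loss"] for part in batch_parts]
    return sum(losses) / len(losses)


def training_epoch_end(outs):
    # stage 3: runs once with the outputs of every batch
    return sum(outs) / len(outs)


train_dataloader = [[1.0, 2.0, 3.0, 4.0], [5.0, 6.0, 7.0, 8.0]]

outs = []
for train_batch in train_dataloader:
    dp_outs = [training_step(sub) for sub in split_batch(train_batch, num_parts=2)]
    outs.append(training_step_end(dp_outs))

epoch_loss = training_epoch_end(outs)
```

The point of the middle stage is that anything needing the whole batch (not just one device's slice) has a place to run before the result is stored.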

Validation loop¶

To add a validation loop, override the validation_step method of the LightningModule:

class LitModel(pl.LightningModule):

def validation_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

self.log("val_loss", loss)

Under the hood, Lightning does the following:

# ...
for batch in train_dataloader:
    loss = model.training_step(batch)
    loss.backward()
    # ...

    if validate_at_some_point:
        # disable grads + batchnorm + dropout
        torch.set_grad_enabled(False)
        model.eval()

        # ----------------- VAL LOOP ---------------
        for val_batch in model.val_dataloader():
            val_out = model.validation_step(val_batch)
        # ----------------- VAL LOOP ---------------

        # enable grads + batchnorm + dropout
        torch.set_grad_enabled(True)
        model.train()

Validation epoch-level metrics¶

If you need to do something with all the outputs of each validation_step, override validation_epoch_end.

def validation_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

pred = ...

return pred

def validation_epoch_end(self, validation_step_outputs):

for pred in validation_step_outputs:

...

Validating with DataParallel¶

When validating using an accelerator that splits data from each batch across GPUs, sometimes you might need to aggregate them on the main GPU for processing (dp, or ddp2).

In this case, implement the validation_step_end method

def validation_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

pred = ...

return {"loss": loss, "pred": pred}

def validation_step_end(self, batch_parts):

# predictions from each GPU

predictions = batch_parts["pred"]

# losses from each GPU

losses = batch_parts["loss"]

gpu_0_prediction = predictions[0]

gpu_1_prediction = predictions[1]

# do something with both outputs

return (losses[0] + losses[1]) / 2

def validation_epoch_end(self, validation_step_outputs):

for out in validation_step_outputs:

...

The full pseudocode of what Lightning does under the hood is:

outs = []
for batch in dataloader:
    batches = split_batch(batch)
    dp_outs = []
    for sub_batch in batches:
        # 1
        dp_out = validation_step(sub_batch)
        dp_outs.append(dp_out)

    # 2
    out = validation_step_end(dp_outs)
    outs.append(out)

# do something with the outputs for all batches
# 3
validation_epoch_end(outs)

Test loop¶

The process for adding a test loop is the same as the process for adding a validation loop. Please refer to the section above for details.

The only difference is that the test loop is only called when .test() is used:

model = Model()

trainer = Trainer()

trainer.fit(model)

# automatically loads the best weights for you

trainer.test(model)

There are two ways to call test():

# call after training

trainer = Trainer()

trainer.fit(model)

# automatically loads the best weights

trainer.test(dataloaders=test_dataloader)

# or call with pretrained model

model = MyLightningModule.load_from_checkpoint(PATH)

trainer = Trainer()

trainer.test(model, dataloaders=test_dataloader)

Inference¶

For research, LightningModules are best structured as systems.

import pytorch_lightning as pl

import torch

from torch import nn

class Autoencoder(pl.LightningModule):

def __init__(self, latent_dim=2):

super().__init__()

self.encoder = nn.Sequential(nn.Linear(28 * 28, 256), nn.ReLU(), nn.Linear(256, latent_dim))

self.decoder = nn.Sequential(nn.Linear(latent_dim, 256), nn.ReLU(), nn.Linear(256, 28 * 28))

def training_step(self, batch, batch_idx):

x, _ = batch

# encode

x = x.view(x.size(0), -1)

z = self.encoder(x)

# decode

recons = self.decoder(z)

# reconstruction

reconstruction_loss = nn.functional.mse_loss(recons, x)

return reconstruction_loss

def validation_step(self, batch, batch_idx):

x, _ = batch

x = x.view(x.size(0), -1)

z = self.encoder(x)

recons = self.decoder(z)

reconstruction_loss = nn.functional.mse_loss(recons, x)

self.log("val_reconstruction", reconstruction_loss)

def predict_step(self, batch, batch_idx, dataloader_idx):

x, _ = batch

# encode

# for predictions, we could return the embedding or the reconstruction or both based on our need.

x = x.view(x.size(0), -1)

return self.encoder(x)

def configure_optimizers(self):

return torch.optim.Adam(self.parameters(), lr=0.0002)

Which can be trained like this:

autoencoder = Autoencoder()

trainer = pl.Trainer(gpus=1)

trainer.fit(autoencoder, train_dataloader, val_dataloader)

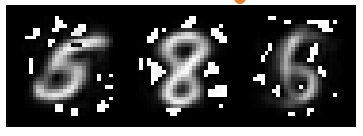

This simple model generates examples that look like this (the encoders and decoders are too weak)

The methods above are part of the lightning interface:

training_step

validation_step

test_step

predict_step

configure_optimizers

Note that in this case, the train loop and val loop are exactly the same. We can of course reuse this code.

class Autoencoder(pl.LightningModule):

def __init__(self, latent_dim=2):

super().__init__()

self.encoder = nn.Sequential(nn.Linear(28 * 28, 256), nn.ReLU(), nn.Linear(256, latent_dim))

self.decoder = nn.Sequential(nn.Linear(latent_dim, 256), nn.ReLU(), nn.Linear(256, 28 * 28))

def training_step(self, batch, batch_idx):

loss = self.shared_step(batch)

return loss

def validation_step(self, batch, batch_idx):

loss = self.shared_step(batch)

self.log("val_loss", loss)

def shared_step(self, batch):

x, _ = batch

# encode

x = x.view(x.size(0), -1)

z = self.encoder(x)

# decode

recons = self.decoder(z)

# loss

return nn.functional.mse_loss(recons, x)

def configure_optimizers(self):

return torch.optim.Adam(self.parameters(), lr=0.0002)

We create a new method called shared_step that all loops can use. This method name is arbitrary and NOT reserved.

Inference in research¶

In the case where we want to perform inference with the system we can add a forward method to the LightningModule.

Note

When using forward, you are responsible for calling eval() and using the no_grad() context manager.

class Autoencoder(pl.LightningModule):

def forward(self, x):

return self.decoder(x)

model = Autoencoder()

model.eval()

with torch.no_grad():

reconstruction = model(embedding)

The advantage of adding a forward is that in complex systems, you can do a much more involved inference procedure, such as text generation:

class Seq2Seq(pl.LightningModule):

def forward(self, x):

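# assumes an embedding layer defined in __init__; calling self(x) here would recurse into forward
embeddings = self.embedding(x)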

hidden_states = self.encoder(embeddings)

for h in hidden_states:

# decode

...

return decoded

In the case where you want to scale your inference, you should be using predict_step().

class Autoencoder(pl.LightningModule):

def forward(self, x):

return self.decoder(x)

def predict_step(self, batch, batch_idx, dataloader_idx=None):

# this calls forward

return self(batch)

data_module = ...

model = Autoencoder()

trainer = Trainer(gpus=2)

trainer.predict(model, data_module)

Inference in production¶

For cases like production, you might want to iterate different models inside a LightningModule.

import pytorch_lightning as pl

from pytorch_lightning.metrics import functional as FM

class ClassificationTask(pl.LightningModule):

def __init__(self, model):

super().__init__()

self.model = model

def training_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

return loss

def validation_step(self, batch, batch_idx):

loss, acc = self._shared_eval_step(batch, batch_idx)

metrics = {"val_acc": acc, "val_loss": loss}

self.log_dict(metrics)

return metrics

def test_step(self, batch, batch_idx):

loss, acc = self._shared_eval_step(batch, batch_idx)

metrics = {"test_acc": acc, "test_loss": loss}

self.log_dict(metrics)

return metrics

def _shared_eval_step(self, batch, batch_idx):

x, y = batch

y_hat = self.model(x)

loss = F.cross_entropy(y_hat, y)

acc = FM.accuracy(y_hat, y)

return loss, acc

def predict_step(self, batch, batch_idx, dataloader_idx):

x, y = batch

y_hat = self.model(x)

return y_hat

def configure_optimizers(self):

return torch.optim.Adam(self.model.parameters(), lr=0.02)

Then pass in any arbitrary model to be fit with this task

for model in [resnet50(), vgg16(), BidirectionalRNN()]:

task = ClassificationTask(model)

trainer = Trainer(gpus=2)

trainer.fit(task, train_dataloader, val_dataloader)

Tasks can be arbitrarily complex such as implementing GAN training, self-supervised or even RL.

class GANTask(pl.LightningModule):

def __init__(self, generator, discriminator):

super().__init__()

self.generator = generator

self.discriminator = discriminator

...

When used like this, the model can be separated from the Task and thus used in production without needing to keep it in a LightningModule.

You can export to ONNX.

Or trace using JIT.

Or run in the Python runtime.

task = ClassificationTask(model)

trainer = Trainer(gpus=2)

trainer.fit(task, train_dataloader, val_dataloader)

# use model after training or load weights and drop into the production system

model.eval()

y_hat = model(x)

LightningModule API¶

Methods¶

configure_callbacks¶

- LightningModule.configure_callbacks()[source]

Configure model-specific callbacks. When the model gets attached, e.g., when .fit() or .test() gets called, the list returned here will be merged with the list of callbacks passed to the Trainer’s callbacks argument. If a callback returned here has the same type as one or several callbacks already present in the Trainer’s callbacks list, it will take priority and replace them. In addition, Lightning will make sure ModelCheckpoint callbacks run last.

- Returns

A list of callbacks which will extend the list of callbacks in the Trainer.

Example:

def configure_callbacks(self):
    early_stop = EarlyStopping(monitor="val_acc", mode="max")
    checkpoint = ModelCheckpoint(monitor="val_loss")
    return [early_stop, checkpoint]

Note

Certain callback methods like on_init_start() will never be invoked on the new callbacks returned here.
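The merge rule described for configure_callbacks (same-type callbacks from the model replace the Trainer's, and checkpoint callbacks run last) can be sketched in plain Python. The classes below are empty stand-ins with a `Stub` suffix, and `merge_callbacks` is a hypothetical helper; this is a reading of the description above, not Lightning's code.

```python
class CallbackStub:
    """Stand-in base class for illustration only."""


class EarlyStoppingStub(CallbackStub):
    def __init__(self, monitor):
        self.monitor = monitor


class ModelCheckpointStub(CallbackStub):
    pass


class LearningRateMonitorStub(CallbackStub):
    pass


def merge_callbacks(trainer_callbacks, model_callbacks):
    """Merge model callbacks into the Trainer's list, by type."""
    model_types = {type(cb) for cb in model_callbacks}
    # drop Trainer callbacks whose type is also returned by the model
    kept = [cb for cb in trainer_callbacks if type(cb) not in model_types]
    merged = kept + list(model_callbacks)
    # move checkpoint callbacks to the end so they run last
    others = [cb for cb in merged if not isinstance(cb, ModelCheckpointStub)]
    checkpoints = [cb for cb in merged if isinstance(cb, ModelCheckpointStub)]
    return others + checkpoints


trainer_callbacks = [
    EarlyStoppingStub(monitor="val_loss"),
    ModelCheckpointStub(),
    LearningRateMonitorStub(),
]
model_callbacks = [EarlyStoppingStub(monitor="val_acc")]

merged = merge_callbacks(trainer_callbacks, model_callbacks)
```

Here the model's early-stopping callback (monitoring `val_acc`) wins over the Trainer's, and the checkpoint callback ends up last.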

configure_optimizers¶

- LightningModule.configure_optimizers()[source]

Choose what optimizers and learning-rate schedulers to use in your optimization. Normally you’d need one. But in the case of GANs or similar you might have multiple.

- Returns

Any of these 6 options.

Single optimizer.

List or Tuple of optimizers.

Two lists - The first list has multiple optimizers, and the second has multiple LR schedulers (or multiple lr_scheduler_config).

Dictionary, with an "optimizer" key, and (optionally) a "lr_scheduler" key whose value is a single LR scheduler or lr_scheduler_config.

Tuple of dictionaries as described above, with an optional "frequency" key.

None - Fit will run without any optimizer.

The lr_scheduler_config is a dictionary which contains the scheduler and its associated configuration. The default configuration is shown below.

lr_scheduler_config = {
    # REQUIRED: The scheduler instance
    "scheduler": lr_scheduler,
    # The unit of the scheduler's step size, could also be 'step'.
    # 'epoch' updates the scheduler on epoch end whereas 'step'
    # updates it after an optimizer update.
    "interval": "epoch",
    # How many epochs/steps should pass between calls to
    # `scheduler.step()`. 1 corresponds to updating the learning
    # rate after every epoch/step.
    "frequency": 1,
    # Metric to monitor for schedulers like `ReduceLROnPlateau`
    "monitor": "val_loss",
    # If set to `True`, will enforce that the value specified in 'monitor'
    # is available when the scheduler is updated, thus stopping
    # training if not found. If set to `False`, it will only produce a warning
    "strict": True,
    # If using the `LearningRateMonitor` callback to monitor the
    # learning rate progress, this keyword can be used to specify
    # a custom logged name
    "name": None,
}

When there are schedulers in which the .step() method is conditioned on a value, such as the torch.optim.lr_scheduler.ReduceLROnPlateau scheduler, Lightning requires that the lr_scheduler_config contains the keyword "monitor" set to the metric name that the scheduler should be conditioned on.

# The ReduceLROnPlateau scheduler requires a monitor
def configure_optimizers(self):
    optimizer = Adam(...)
    return {
        "optimizer": optimizer,
        "lr_scheduler": {
            "scheduler": ReduceLROnPlateau(optimizer, ...),
            "monitor": "metric_to_track",
            "frequency": "indicates how often the metric is updated",
            # If "monitor" references validation metrics, then "frequency" should be set to a
            # multiple of "trainer.check_val_every_n_epoch".
        },
    }


# In the case of two optimizers, only one using the ReduceLROnPlateau scheduler
def configure_optimizers(self):
    optimizer1 = Adam(...)
    optimizer2 = SGD(...)
    scheduler1 = ReduceLROnPlateau(optimizer1, ...)
    scheduler2 = LambdaLR(optimizer2, ...)
    return (
        {
            "optimizer": optimizer1,
            "lr_scheduler": {
                "scheduler": scheduler1,
                "monitor": "metric_to_track",
            },
        },
        {"optimizer": optimizer2, "lr_scheduler": scheduler2},
    )

Metrics can be made available to monitor by simply logging them using self.log('metric_to_track', metric_val) in your LightningModule.

Note

The frequency value specified in a dict along with the optimizer key is an int corresponding to the number of sequential batches optimized with the specific optimizer. It should be given to none or to all of the optimizers. There is a difference between passing multiple optimizers in a list, and passing multiple optimizers in dictionaries with a frequency of 1:

In the former case, all optimizers will operate on the given batch in each optimization step.

In the latter, only one optimizer will operate on the given batch at every step.

This is different from the frequency value specified in the lr_scheduler_config mentioned above.

def configure_optimizers(self):
    optimizer_one = torch.optim.SGD(self.model.parameters(), lr=0.01)
    optimizer_two = torch.optim.SGD(self.model.parameters(), lr=0.01)
    return [
        {"optimizer": optimizer_one, "frequency": 5},
        {"optimizer": optimizer_two, "frequency": 10},
    ]

In this example, the first optimizer will be used for the first 5 steps, the second optimizer for the next 10 steps and that cycle will continue. If an LR scheduler is specified for an optimizer using the lr_scheduler key in the above dict, the scheduler will only be updated when its optimizer is being used.

Examples:

# most cases. no learning rate scheduler
def configure_optimizers(self):
    return Adam(self.parameters(), lr=1e-3)


# multiple optimizer case (e.g.: GAN)
def configure_optimizers(self):
    gen_opt = Adam(self.model_gen.parameters(), lr=0.01)
    dis_opt = Adam(self.model_dis.parameters(), lr=0.02)
    return gen_opt, dis_opt


# example with learning rate schedulers
def configure_optimizers(self):
    gen_opt = Adam(self.model_gen.parameters(), lr=0.01)
    dis_opt = Adam(self.model_dis.parameters(), lr=0.02)
    dis_sch = CosineAnnealingLR(dis_opt, T_max=10)
    return [gen_opt, dis_opt], [dis_sch]


# example with step-based learning rate schedulers
# each optimizer has its own scheduler
def configure_optimizers(self):
    gen_opt = Adam(self.model_gen.parameters(), lr=0.01)
    dis_opt = Adam(self.model_dis.parameters(), lr=0.02)
    gen_sch = {
        'scheduler': ExponentialLR(gen_opt, 0.99),
        'interval': 'step'  # called after each training step
    }
    dis_sch = CosineAnnealingLR(dis_opt, T_max=10)  # called every epoch
    return [gen_opt, dis_opt], [gen_sch, dis_sch]


# example with optimizer frequencies
# see training procedure in `Improved Training of Wasserstein GANs`, Algorithm 1
# https://arxiv.org/abs/1704.00028
def configure_optimizers(self):
    gen_opt = Adam(self.model_gen.parameters(), lr=0.01)
    dis_opt = Adam(self.model_dis.parameters(), lr=0.02)
    n_critic = 5
    return (
        {'optimizer': dis_opt, 'frequency': n_critic},
        {'optimizer': gen_opt, 'frequency': 1}
    )
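To make the optimizer "frequency" cycling concrete (5 batches with the first optimizer, then 10 with the second, then repeat), here is a small plain-Python sketch. The helper name `active_optimizer_index` is made up for illustration, and the logic is an interpretation of the description above rather than Lightning's internal code.

```python
def active_optimizer_index(batch_idx, frequencies):
    """Return which optimizer handles batch_idx, given per-optimizer frequencies."""
    cycle_length = sum(frequencies)
    position = batch_idx % cycle_length  # position inside the current cycle
    for optimizer_idx, frequency in enumerate(frequencies):
        if position < frequency:
            return optimizer_idx
        position -= frequency
    raise ValueError("frequencies must be positive integers")


# frequencies taken from the example: optimizer_one=5, optimizer_two=10
frequencies = [5, 10]
schedule = [active_optimizer_index(i, frequencies) for i in range(16)]
```

With a list of optimizers and no frequencies, by contrast, every optimizer would see every batch.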

Note

Some things to know:

Lightning calls .backward() and .step() on each optimizer and learning rate scheduler as needed.

If you use 16-bit precision (precision=16), Lightning will automatically handle the optimizers.

If you use multiple optimizers, training_step() will have an additional optimizer_idx parameter.

If you use torch.optim.LBFGS, Lightning handles the closure function automatically for you.

If you use multiple optimizers, gradients will be calculated only for the parameters of current optimizer at each training step.

If you need to control how often those optimizers step or override the default .step() schedule, override the optimizer_step() hook.

forward¶

- LightningModule.forward(*args, **kwargs)[source]

Same as torch.nn.Module.forward().

freeze¶

log¶

- LightningModule.log(name, value, prog_bar=False, logger=True, on_step=None, on_epoch=None, reduce_fx='default', tbptt_reduce_fx=None, tbptt_pad_token=None, enable_graph=False, sync_dist=False, sync_dist_op=None, sync_dist_group=None, add_dataloader_idx=True, batch_size=None, metric_attribute=None, rank_zero_only=None)[source]

Log a key, value pair.

Example:

self.log('train_loss', loss)

The default behavior per hook is as follows:

| LightningModule Hook | on_step | on_epoch | prog_bar | logger |
|---|---|---|---|---|
| training_step | T | F | F | T |
| training_step_end | T | F | F | T |
| training_epoch_end | F | T | F | T |
| validation_step* | F | T | F | T |
| validation_step_end* | F | T | F | T |
| validation_epoch_end* | F | T | F | T |

*also applies to the test loop
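The per-hook defaults listed above can be read as a lookup that only applies when on_step or on_epoch is left as None. The sketch below encodes that reading in plain Python; `LOG_DEFAULTS` and `resolve_log_flags` are hypothetical names, and the mapping of test hooks onto the validation rows follows the "also applies to the test loop" footnote.

```python
# (on_step, on_epoch) defaults per hook, copied from the defaults listed above
LOG_DEFAULTS = {
    "training_step": (True, False),
    "training_step_end": (True, False),
    "training_epoch_end": (False, True),
    "validation_step": (False, True),
    "validation_step_end": (False, True),
    "validation_epoch_end": (False, True),
}


def resolve_log_flags(hook, on_step=None, on_epoch=None):
    """Fill in on_step/on_epoch from the defaults when they are None."""
    # test_* hooks share the defaults of the matching validation_* hooks
    if hook.startswith("test_"):
        hook = "validation_" + hook[len("test_"):]
    default_step, default_epoch = LOG_DEFAULTS[hook]
    resolved_step = default_step if on_step is None else on_step
    resolved_epoch = default_epoch if on_epoch is None else on_epoch
    return resolved_step, resolved_epoch


train_flags = resolve_log_flags("training_step")
test_flags = resolve_log_flags("test_step")
both_flags = resolve_log_flags("training_step", on_epoch=True)
```

An explicit True or False always wins over the default, which is why `both_flags` logs at step and epoch level.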

- Parameters

name – key to log

value – value to log. Can be a float, Tensor, Metric, or a dictionary of the former.

prog_bar – if True logs to the progress bar

logger – if True logs to the logger

on_step – if True logs at this step. None auto-logs at the training_step but not validation/test_step

on_epoch – if True logs epoch accumulated metrics. None auto-logs at the val/test step but not training_step

reduce_fx – reduction function over step values for end of epoch. torch.mean() by default.

enable_graph – if True, will not auto detach the graph

sync_dist – if True, reduces the metric across GPUs/TPUs. Use with care as this may lead to a significant communication overhead.

sync_dist_group – the ddp group to sync across

add_dataloader_idx – if True, appends the index of the current dataloader to the name (when using multiple). If False, user needs to give unique names for each dataloader to not mix values

batch_size – Current batch_size. This will be directly inferred from the loaded batch, but some data structures might need to explicitly provide it.

metric_attribute – To restore the metric state, Lightning requires the reference of the torchmetrics.Metric in your model. This is found automatically if it is a model attribute.

rank_zero_only – Whether the value will be logged only on rank 0. This will prevent synchronization which would produce a deadlock as not all processes would perform this log call.

log_dict¶

- LightningModule.log_dict(dictionary, prog_bar=False, logger=True, on_step=None, on_epoch=None, reduce_fx='default', tbptt_reduce_fx=None, tbptt_pad_token=None, enable_graph=False, sync_dist=False, sync_dist_op=None, sync_dist_group=None, add_dataloader_idx=True, batch_size=None, rank_zero_only=None)[source]

Log a dictionary of values at once.

Example:

values = {'loss': loss, 'acc': acc, ..., 'metric_n': metric_n}
self.log_dict(values)

- Parameters

dictionary (Mapping[str, Union[Metric, Tensor, int, float, Mapping[str, Union[Metric, Tensor, int, float]]]]) – key value pairs. The values can be a float, Tensor, Metric, or a dictionary of the former.

on_step (Optional[bool]) – if True logs at this step. None auto-logs for training_step but not validation/test_step

on_epoch (Optional[bool]) – if True logs epoch accumulated metrics. None auto-logs for val/test step but not training_step

reduce_fx (Union[str, Callable]) – reduction function over step values for end of epoch. torch.mean() by default.

enable_graph (bool) – if True, will not auto detach the graph

sync_dist (bool) – if True, reduces the metric across GPUs/TPUs. Use with care as this may lead to a significant communication overhead.

sync_dist_group (Optional[Any]) – the ddp group to sync across

add_dataloader_idx (bool) – if True, appends the index of the current dataloader to the name (when using multiple). If False, user needs to give unique names for each dataloader to not mix values

batch_size (Optional[int]) – Current batch_size. This will be directly inferred from the loaded batch, but some data structures might need to explicitly provide it.

rank_zero_only (Optional[bool]) – Whether the value will be logged only on rank 0. This will prevent synchronization which would produce a deadlock as not all processes would perform this log call.

- Return type

manual_backward¶

- LightningModule.manual_backward(loss, *args, **kwargs)[source]

Call this directly from your training_step() when doing optimizations manually. By using this, Lightning can ensure that all the proper scaling gets applied when using mixed precision.

See manual optimization for more examples.

Example:

def training_step(...):
    opt = self.optimizers()
    loss = ...
    opt.zero_grad()
    # automatically applies scaling, etc...
    self.manual_backward(loss)
    opt.step()

- Parameters

loss (Tensor) – The tensor on which to compute gradients. Must have a graph attached.

*args – Additional positional arguments to be forwarded to backward()

**kwargs – Additional keyword arguments to be forwarded to backward()

- Return type

print¶

- LightningModule.print(*args, **kwargs)[source]

Prints only from process 0. Use this in any distributed mode to log only once.

- Parameters

- Return type

Example:

def forward(self, x):
    self.print(x, 'in forward')

predict_step¶

- LightningModule.predict_step(batch, batch_idx, dataloader_idx=None)[source]

Step function called during predict(). By default, it calls forward(). Override to add any processing logic.

The predict_step() is used to scale inference on multi-devices.

To prevent an OOM error, it is possible to use BasePredictionWriter callback to write the predictions to disk or database after each batch or on epoch end.

The BasePredictionWriter should be used while using a spawn based accelerator. This happens for Trainer(strategy="ddp_spawn") or training on 8 TPU cores with Trainer(tpu_cores=8) as predictions won’t be returned.

Example

class MyModel(LightningModule):
    def predict_step(self, batch, batch_idx, dataloader_idx=None):
        return self(batch)


dm = ...
model = MyModel()
trainer = Trainer(gpus=2)
predictions = trainer.predict(model, dm)

save_hyperparameters¶

- LightningModule.save_hyperparameters(*args, ignore=None, frame=None, logger=True)

Save arguments to hparams attribute.

- Parameters

args – single object of dict, NameSpace or OmegaConf or string names or arguments from class __init__

ignore (Union[Sequence[str], str, None]) – an argument name or a list of argument names from class __init__ to be ignored

frame (Optional[FrameType]) – a frame object. Default is None

logger (bool) – Whether to send the hyperparameters to the logger. Default: True

- Return type

- Example::

>>> class ManuallyArgsModel(HyperparametersMixin):
...     def __init__(self, arg1, arg2, arg3):
...         super().__init__()
...         # manually assign arguments
...         self.save_hyperparameters('arg1', 'arg3')
...     def forward(self, *args, **kwargs):
...         ...
>>> model = ManuallyArgsModel(1, 'abc', 3.14)
>>> model.hparams
"arg1": 1
"arg3": 3.14

>>> class AutomaticArgsModel(HyperparametersMixin):
...     def __init__(self, arg1, arg2, arg3):
...         super().__init__()
...         # equivalent automatic
...         self.save_hyperparameters()
...     def forward(self, *args, **kwargs):
...         ...
>>> model = AutomaticArgsModel(1, 'abc', 3.14)
>>> model.hparams
"arg1": 1
"arg2": abc
"arg3": 3.14

>>> class SingleArgModel(HyperparametersMixin):
...     def __init__(self, params):
...         super().__init__()
...         # manually assign single argument
...         self.save_hyperparameters(params)
...     def forward(self, *args, **kwargs):
...         ...
>>> model = SingleArgModel(Namespace(p1=1, p2='abc', p3=3.14))
>>> model.hparams
"p1": 1
"p2": abc
"p3": 3.14

>>> class ManuallyArgsModel(HyperparametersMixin):
...     def __init__(self, arg1, arg2, arg3):
...         super().__init__()
...         # pass argument(s) to ignore as a string or in a list
...         self.save_hyperparameters(ignore='arg2')
...     def forward(self, *args, **kwargs):
...         ...
>>> model = ManuallyArgsModel(1, 'abc', 3.14)
>>> model.hparams
"arg1": 1
"arg3": 3.14
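Since the signature accepts a frame object, one plausible way to picture how the arguments of __init__ get collected is frame inspection. The sketch below is only an approximation under that assumption: `HParamsSketch` is a made-up class, it handles just the "no arguments", "string names" and `ignore` cases, and it stores a plain dict rather than Lightning's hparams container.

```python
import inspect


class HParamsSketch:
    """Hypothetical mixin that mimics part of save_hyperparameters."""

    def save_hyperparameters(self, *names, ignore=()):
        # locals of the caller, which is expected to be the subclass __init__
        caller_locals = inspect.currentframe().f_back.f_locals
        # argument names declared by __init__, without "self"
        init_args = [
            name
            for name in inspect.signature(type(self).__init__).parameters
            if name != "self"
        ]
        if isinstance(ignore, str):
            ignore = [ignore]
        selected = names or init_args  # no names given: take every argument
        self.hparams = {
            name: caller_locals[name] for name in selected if name not in ignore
        }


class IgnoreArgsModel(HParamsSketch):
    def __init__(self, arg1, arg2, arg3):
        self.save_hyperparameters(ignore="arg2")


class NamedArgsModel(HParamsSketch):
    def __init__(self, arg1, arg2, arg3):
        self.save_hyperparameters("arg1", "arg3")


ignore_model = IgnoreArgsModel(1, "abc", 3.14)
named_model = NamedArgsModel(1, "abc", 3.14)
```

Both models end up with the same two entries, matching the first and last doctest examples above.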

test_step¶

- LightningModule.test_step(*args, **kwargs)[source]

Operates on a single batch of data from the test set. In this step you’d normally generate examples or calculate anything of interest such as accuracy.

# the pseudocode for these calls
test_outs = []
for test_batch in test_data:
    out = test_step(test_batch)
    test_outs.append(out)
test_epoch_end(test_outs)

- Parameters

- Return type

- Returns

Any of.

Any object or value

None - Testing will skip to the next batch

# if you have one test dataloader:
def test_step(self, batch, batch_idx):
    ...


# if you have multiple test dataloaders:
def test_step(self, batch, batch_idx, dataloader_idx):
    ...

Examples:

# CASE 1: A single test dataset
def test_step(self, batch, batch_idx):
    x, y = batch

    # implement your own
    out = self(x)
    loss = self.loss(out, y)

    # log 6 example images
    # or generated text... or whatever
    sample_imgs = x[:6]
    grid = torchvision.utils.make_grid(sample_imgs)
    self.logger.experiment.add_image('example_images', grid, 0)

    # calculate acc
    labels_hat = torch.argmax(out, dim=1)
    test_acc = torch.sum(y == labels_hat).item() / (len(y) * 1.0)

    # log the outputs!
    self.log_dict({'test_loss': loss, 'test_acc': test_acc})

If you pass in multiple test dataloaders, test_step() will have an additional argument.

# CASE 2: multiple test dataloaders
def test_step(self, batch, batch_idx, dataloader_idx):
    # dataloader_idx tells you which dataset this is.
    ...

Note

If you don’t need to test you don’t need to implement this method.

Note

When the test_step() is called, the model has been put in eval mode and PyTorch gradients have been disabled. At the end of the test epoch, the model goes back to training mode and gradients are enabled.

test_step_end¶

- LightningModule.test_step_end(*args, **kwargs)[source]

Use this when testing with dp or ddp2 because test_step() will operate on only part of the batch. However, this is still optional and only needed for things like softmax or NCE loss.

Note

If you later switch to ddp or some other mode, this will still be called so that you don’t have to change your code.

# pseudocode
sub_batches = split_batches_for_dp(batch)
batch_parts_outputs = [test_step(sub_batch) for sub_batch in sub_batches]
test_step_end(batch_parts_outputs)

- Parameters

batch_parts_outputs¶ – What you return in test_step() for each batch part.

- Return type

- Returns

None or anything

# WITHOUT test_step_end # if used in DP or DDP2, this batch is 1/num_gpus large def test_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self(x) loss = self.softmax(out) self.log("test_loss", loss) # -------------- # with test_step_end to do softmax over the full batch def test_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self.encoder(x) return out def test_step_end(self, output_results): # this out is now the full size of the batch all_test_step_outs = output_results.out loss = nce_loss(all_test_step_outs) self.log("test_loss", loss)

See also

See the Multi-GPU training guide for more details.

test_epoch_end¶

- LightningModule.test_epoch_end(outputs)[source]

Called at the end of a test epoch with the output of all test steps.

# the pseudocode for these calls test_outs = [] for test_batch in test_data: out = test_step(test_batch) test_outs.append(out) test_epoch_end(test_outs)

- Parameters

outputs¶ (List[Union[Tensor, Dict[str, Any]]]) – List of outputs you defined in test_step_end(), or if there are multiple dataloaders, a list containing a list of outputs for each dataloader

- Return type

- Returns

None

Note

If you didn’t define a test_step(), this won’t be called.

Examples

With a single dataloader:

def test_epoch_end(self, outputs):
    # do something with the outputs of all test batches
    # (assumes each test_step returned a dict with a "predictions" entry)
    all_test_preds = [out["predictions"] for out in outputs]
    some_result = calc_all_results(all_test_preds)
    self.log("some_result", some_result)

With multiple dataloaders, outputs will be a list of lists. The outer list contains one entry per dataloader, while the inner list contains the individual outputs of each test step for that dataloader.

def test_epoch_end(self, outputs): final_value = 0 for dataloader_outputs in outputs: for test_step_out in dataloader_outputs: # do something final_value += test_step_out self.log("final_metric", final_value)
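To make the list-of-lists layout concrete, here is a plain-Python sketch in which floats stand in for the per-step outputs. The values and the helper name `mean_per_dataloader` are made up for illustration and are not part of Lightning.

```python
from statistics import mean
from typing import List


def mean_per_dataloader(outputs: List[List[float]]) -> List[float]:
    """Average the step outputs of each dataloader separately."""
    return [mean(dataloader_outputs) for dataloader_outputs in outputs]


# outer list: one entry per test dataloader
# inner list: one entry per test_step call on that dataloader
outputs = [
    [0.5, 0.25, 0.75],  # dataloader 0, three batches
    [1.0, 2.0],         # dataloader 1, two batches
]
per_loader = mean_per_dataloader(outputs)
print(per_loader)
```

Inside a real `test_epoch_end` the inner entries would be whatever `test_step` returned (tensors or dicts), so the reduction would need to be adapted accordingly.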

to_onnx¶

- LightningModule.to_onnx(file_path, input_sample=None, **kwargs)[source]

Saves the model in ONNX format.

- Parameters

Example

>>> class SimpleModel(LightningModule): ... def __init__(self): ... super().__init__() ... self.l1 = torch.nn.Linear(in_features=64, out_features=4) ... ... def forward(self, x): ... return torch.relu(self.l1(x.view(x.size(0), -1)))

>>> with tempfile.NamedTemporaryFile(suffix='.onnx', delete=False) as tmpfile: ... model = SimpleModel() ... input_sample = torch.randn((1, 64)) ... model.to_onnx(tmpfile.name, input_sample, export_params=True) ... os.path.isfile(tmpfile.name) True

to_torchscript¶

- LightningModule.to_torchscript(file_path=None, method='script', example_inputs=None, **kwargs)[source]

By default compiles the whole model to a ScriptModule. If you want to use tracing, please provide the argument method='trace' and make sure that either the example_inputs argument is provided, or the model has example_input_array set. If you would like to customize the modules that are scripted you should override this method. In case you want to return multiple modules, we recommend using a dictionary.

- Parameters

file_path¶ (Union[str, Path, None]) – Path where to save the torchscript. Default: None (no file saved).

method¶ (Optional[str]) – Whether to use TorchScript’s script or trace method. Default: ‘script’

example_inputs¶ (Optional[Any]) – An input to be used to do tracing when method is set to ‘trace’. Default: None (uses example_input_array)

**kwargs¶ – Additional arguments that will be passed to the torch.jit.script() or torch.jit.trace() function.

Note

Example

>>> class SimpleModel(LightningModule):
...     def __init__(self):
...         super().__init__()
...         self.l1 = torch.nn.Linear(in_features=64, out_features=4)
...
...     def forward(self, x):
...         return torch.relu(self.l1(x.view(x.size(0), -1)))
...
>>> model = SimpleModel()
>>> torch.jit.save(model.to_torchscript(), "model.pt")
>>> os.path.isfile("model.pt")
True
>>> # passing file_path makes to_torchscript save the traced module itself
>>> traced = model.to_torchscript(file_path="model_trace.pt", method='trace',
...                               example_inputs=torch.randn(1, 64))
>>> os.path.isfile("model_trace.pt")
True

training_step¶

- LightningModule.training_step(*args, **kwargs)[source]

Here you compute and return the training loss and some additional metrics for e.g. the progress bar or logger.

- Parameters

batch¶ (Tensor | (Tensor, …) | [Tensor, …]) – The output of your DataLoader. A tensor, tuple or list.

batch_idx¶ (int) – Integer displaying index of this batch

optimizer_idx¶ (int) – When using multiple optimizers, this argument will also be present.

hiddens¶ (Any) – Passed in if truncated_bptt_steps > 0.

- Return type

- Returns

Any of:

Tensor - The loss tensor

dict - A dictionary. Can include any keys, but must include the key 'loss'

None - Training will skip to the next batch. This is only for automatic optimization. This is not supported for multi-GPU, TPU, IPU, or DeepSpeed.

In this step you’d normally do the forward pass and calculate the loss for a batch. You can also do fancier things like multiple forward passes or something model specific.

Example:

def training_step(self, batch, batch_idx): x, y, z = batch out = self.encoder(x) loss = self.loss(out, x) return loss

If you define multiple optimizers, this step will be called with an additional optimizer_idx parameter.

# Multiple optimizers (e.g.: GANs)
def training_step(self, batch, batch_idx, optimizer_idx):
    if optimizer_idx == 0:
        # do training_step with encoder
        ...
    if optimizer_idx == 1:
        # do training_step with decoder
        ...

If you add truncated back propagation through time you will also get an additional argument with the hidden states of the previous step.

# Truncated back-propagation through time
def training_step(self, batch, batch_idx, hiddens):
    # hiddens are the hidden states from the previous truncated backprop step
    x, y = batch
    out, hiddens = self.lstm(x, hiddens)
    loss = ...
    return {"loss": loss, "hiddens": hiddens}

Note

The loss value shown in the progress bar is smoothed (averaged) over the last values, so it differs from the actual loss returned in train/validation step.

training_step_end¶

- LightningModule.training_step_end(*args, **kwargs)[source]

Use this when training with dp or ddp2 because training_step() will operate on only part of the batch. However, this is still optional and only needed for things like softmax or NCE loss.

Note

If you later switch to ddp or some other mode, this will still be called so that you don’t have to change your code.

# pseudocode sub_batches = split_batches_for_dp(batch) batch_parts_outputs = [training_step(sub_batch) for sub_batch in sub_batches] training_step_end(batch_parts_outputs)

- Parameters

batch_parts_outputs¶ – What you return in training_step for each batch part.

- Return type

- Returns

Anything

When using dp/ddp2 distributed backends, only a portion of the batch is inside the training_step:

def training_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self(x) # softmax uses only a portion of the batch in the denominator loss = self.softmax(out) loss = nce_loss(loss) return loss

If you wish to do something with all the parts of the batch, then use this method to do it:

def training_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self.encoder(x) return {"pred": out} def training_step_end(self, training_step_outputs): gpu_0_pred = training_step_outputs[0]["pred"] gpu_1_pred = training_step_outputs[1]["pred"] gpu_n_pred = training_step_outputs[n]["pred"] # this softmax now uses the full batch loss = nce_loss([gpu_0_pred, gpu_1_pred, gpu_n_pred]) return loss
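The difference between normalising inside each batch part and normalising over the gathered batch can be seen in a small plain-Python sketch. It uses lists of floats instead of tensors, and the helpers `softmax` and `split_batch` are illustrative stand-ins rather than Lightning functions.

```python
import math
from typing import List


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax over a flat list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def split_batch(batch: List[float], num_parts: int) -> List[List[float]]:
    """Split a batch into equally sized contiguous parts (one per device)."""
    size = len(batch) // num_parts
    return [batch[i * size:(i + 1) * size] for i in range(num_parts)]


batch_scores = [1.0, 2.0, 3.0, 4.0]
parts = split_batch(batch_scores, num_parts=2)

# Normalising inside each part: the denominator only sees half of the batch.
per_part = [p for part in parts for p in softmax(part)]

# Gathering the parts first and normalising once: the denominator sees everything.
gathered = [s for part in parts for s in part]
full = softmax(gathered)

print(sum(per_part), sum(full))
```

The per-part probabilities add up to roughly the number of parts, while the gathered version adds up to roughly one, which is why losses with a batch-wide denominator belong in `training_step_end`.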

See also

See the Multi-GPU training guide for more details.

training_epoch_end¶

- LightningModule.training_epoch_end(outputs)[source]

Called at the end of the training epoch with the outputs of all training steps. Use this in case you need to do something with all the outputs returned by training_step().

# the pseudocode for these calls
train_outs = []
for train_batch in train_data:
    out = training_step(train_batch)
    train_outs.append(out)
training_epoch_end(train_outs)

- Parameters

outputs¶ (List[Union[Tensor, Dict[str, Any]]]) – List of outputs you defined in training_step(). If there are multiple optimizers, it is a list containing a list of outputs for each optimizer. If using truncated_bptt_steps > 1, each element is a list of outputs corresponding to the outputs of each processed split batch.

- Return type

- Returns

None

Note

If this method is not overridden, this won’t be called.

def training_epoch_end(self, training_step_outputs): # do something with all training_step outputs for out in training_step_outputs: ...

unfreeze¶

validation_step¶

- LightningModule.validation_step(*args, **kwargs)[source]

Operates on a single batch of data from the validation set. In this step you might generate examples or calculate anything of interest like accuracy.

# the pseudocode for these calls val_outs = [] for val_batch in val_data: out = validation_step(val_batch) val_outs.append(out) validation_epoch_end(val_outs)

- Parameters

- Return type

- Returns

Any object or value

None- Validation will skip to the next batch

# pseudocode of order val_outs = [] for val_batch in val_data: out = validation_step(val_batch) if defined("validation_step_end"): out = validation_step_end(out) val_outs.append(out) val_outs = validation_epoch_end(val_outs)

# if you have one val dataloader: def validation_step(self, batch, batch_idx): ... # if you have multiple val dataloaders: def validation_step(self, batch, batch_idx, dataloader_idx): ...

Examples:

# CASE 1: A single validation dataset def validation_step(self, batch, batch_idx): x, y = batch # implement your own out = self(x) loss = self.loss(out, y) # log 6 example images # or generated text... or whatever sample_imgs = x[:6] grid = torchvision.utils.make_grid(sample_imgs) self.logger.experiment.add_image('example_images', grid, 0) # calculate acc labels_hat = torch.argmax(out, dim=1) val_acc = torch.sum(y == labels_hat).item() / (len(y) * 1.0) # log the outputs! self.log_dict({'val_loss': loss, 'val_acc': val_acc})

If you pass in multiple val dataloaders, validation_step() will have an additional argument.

# CASE 2: multiple validation dataloaders
def validation_step(self, batch, batch_idx, dataloader_idx):
    # dataloader_idx tells you which dataset this is.
    ...

Note

If you don’t need to validate you don’t need to implement this method.

Note

When validation_step() is called, the model has been put in eval mode and PyTorch gradients have been disabled. At the end of validation, the model goes back to training mode and gradients are enabled.

validation_step_end¶

- LightningModule.validation_step_end(*args, **kwargs)[source]

Use this when validating with dp or ddp2 because validation_step() will operate on only part of the batch. However, this is still optional and only needed for things like softmax or NCE loss.

Note

If you later switch to ddp or some other mode, this will still be called so that you don’t have to change your code.

# pseudocode sub_batches = split_batches_for_dp(batch) batch_parts_outputs = [validation_step(sub_batch) for sub_batch in sub_batches] validation_step_end(batch_parts_outputs)

- Parameters

batch_parts_outputs¶ – What you return in validation_step() for each batch part.

- Return type

- Returns

None or anything

# WITHOUT validation_step_end # if used in DP or DDP2, this batch is 1/num_gpus large def validation_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self.encoder(x) loss = self.softmax(out) loss = nce_loss(loss) self.log("val_loss", loss) # -------------- # with validation_step_end to do softmax over the full batch def validation_step(self, batch, batch_idx): # batch is 1/num_gpus big x, y = batch out = self(x) return out def validation_step_end(self, val_step_outputs): for out in val_step_outputs: ...

See also

See the Multi-GPU training guide for more details.

validation_epoch_end¶

- LightningModule.validation_epoch_end(outputs)[source]

Called at the end of the validation epoch with the outputs of all validation steps.

# the pseudocode for these calls val_outs = [] for val_batch in val_data: out = validation_step(val_batch) val_outs.append(out) validation_epoch_end(val_outs)

- Parameters

outputs¶ (List[Union[Tensor, Dict[str, Any]]]) – List of outputs you defined in validation_step(), or if there are multiple dataloaders, a list containing a list of outputs for each dataloader.

- Return type

- Returns

None

Note

If you didn’t define a validation_step(), this won’t be called.

Examples

With a single dataloader:

def validation_epoch_end(self, val_step_outputs): for out in val_step_outputs: ...

With multiple dataloaders, outputs will be a list of lists. The outer list contains one entry per dataloader, while the inner list contains the individual outputs of each validation step for that dataloader.

def validation_epoch_end(self, outputs):
    final_value = 0
    for dataloader_outputs in outputs:
        for val_step_out in dataloader_outputs:
            # do something
            final_value += val_step_out
    self.log("final_metric", final_value)

Properties¶

These are properties available in a LightningModule.

current_epoch¶

The current epoch

def training_step(self):

if self.current_epoch == 0:

...

device¶

The device the module is on. Use it to keep your code device agnostic

def training_step(self):

z = torch.rand(2, 3, device=self.device)

global_rank¶

The global_rank of this LightningModule. Lightning saves logs, weights etc only from global_rank = 0. You normally do not need to use this property

Global rank refers to the index of that GPU across ALL GPUs. For example, if using 10 machines, each with 4 GPUs, the 4th GPU on the 10th machine has global_rank = 39
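The arithmetic in the example above can be checked with a short sketch. It assumes zero-based ranks that are numbered node by node, which matches the example but is an assumption about how the cluster launcher assigns ranks. The helper `global_rank_of` is hypothetical and not part of Lightning.

```python
def global_rank_of(node_rank: int, local_rank: int, gpus_per_node: int) -> int:
    """Zero-based global rank, assuming ranks are assigned node by node."""
    if not 0 <= local_rank < gpus_per_node:
        raise ValueError("local_rank must be in [0, gpus_per_node)")
    return node_rank * gpus_per_node + local_rank


# 10 machines with 4 GPUs each:
# the 4th GPU (index 3) on the 10th machine (index 9)
rank = global_rank_of(node_rank=9, local_rank=3, gpus_per_node=4)
print(rank)
```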

global_step¶

The current step (does not reset each epoch)

def training_step(self):

self.logger.experiment.log_image(..., step=self.global_step)

hparams¶

The arguments passed through __init__() and saved by calling save_hyperparameters() can be accessed through the hparams attribute.

def __init__(self, learning_rate):

self.save_hyperparameters()

def configure_optimizers(self):

return Adam(self.parameters(), lr=self.hparams.learning_rate)

logger¶

The current logger being used (tensorboard or other supported logger)

def training_step(self):

# the generic logger (same no matter if tensorboard or other supported logger)

self.logger

# the particular logger

tensorboard_logger = self.logger.experiment

local_rank¶

The local_rank of this LightningModule. Lightning saves logs, weights etc only from global_rank = 0. You normally do not need to use this property

Local rank refers to the rank on that machine. For example, if using 10 machines, the GPU at index 0 on each machine has local_rank = 0.

precision¶

The type of precision used:

def training_step(self):

if self.precision == 16:

...

trainer¶

Pointer to the trainer

def training_step(self):

max_steps = self.trainer.max_steps

any_flag = self.trainer.any_flag

use_amp¶

True if using Automatic Mixed Precision (AMP)

automatic_optimization¶

When set to False, Lightning does not automate the optimization process. This means you are responsible for handling

your optimizers. However, we do take care of precision and any accelerators used.

See manual optimization for details.

def __init__(self):

self.automatic_optimization = False

def training_step(self, batch, batch_idx):

opt = self.optimizers(use_pl_optimizer=True)

loss = ...

opt.zero_grad()

self.manual_backward(loss)

opt.step()

This is recommended only if using 2+ optimizers AND if you know how to perform the optimization procedure properly. Note

that automatic optimization can still be used with multiple optimizers by relying on the optimizer_idx parameter.

Manual optimization is most useful for research topics like reinforcement learning, sparse coding, and GAN research.

def __init__(self):

self.automatic_optimization = False

def training_step(self, batch, batch_idx):

# use_pl_optimizer defaults to True; pass use_pl_optimizer=False to access the unwrapped optimizers

opt_a, opt_b = self.optimizers(use_pl_optimizer=True)

gen_loss = ...

opt_a.zero_grad()

self.manual_backward(gen_loss)

opt_a.step()

disc_loss = ...

opt_b.zero_grad()

self.manual_backward(disc_loss)

opt_b.step()

example_input_array¶

Set and access example_input_array which is basically a single batch.

def __init__(self):

self.example_input_array = ...

self.generator = ...

def on_train_epoch_end(self):

# generate some images using the example_input_array

gen_images = self.generator(self.example_input_array)

datamodule¶

Set or access your datamodule.

def configure_optimizers(self):

num_training_samples = len(self.trainer.datamodule.train_dataloader())

...

model_size¶

Get the model file size (in megabytes) using self.model_size inside LightningModule.

truncated_bptt_steps¶

Truncated backpropagation through time performs backprop every k steps of

a much longer sequence. This is made possible by passing training batches

split along the time dimension into splits of size k to the

training_step. In order to keep the same forward propagation behavior, all

hidden states should be kept in-between each time-dimension split.

If this is enabled, your batches will automatically get truncated and the trainer will apply Truncated Backprop to it.

from pytorch_lightning import LightningModule

class MyModel(LightningModule):

def __init__(self, input_size, hidden_size, num_layers):

super().__init__()

# batch_first has to be set to True

self.lstm = nn.LSTM(

input_size=input_size,

hidden_size=hidden_size,

num_layers=num_layers,

batch_first=True,

)

...

# Important: This property activates truncated backpropagation through time

# Setting this value to 2 splits the batch into sequences of size 2

self.truncated_bptt_steps = 2

# Truncated back-propagation through time

def training_step(self, batch, batch_idx, hiddens):

x, y = batch

# the training step must be updated to accept a ``hiddens`` argument

# hiddens are the hiddens from the previous truncated backprop step

out, hiddens = self.lstm(x, hiddens)

...

return {"loss": ..., "hiddens": hiddens}

Lightning takes care of splitting your batch along the time-dimension. It is

assumed to be the second dimension of your batches. Therefore, in the

example above we have set batch_first=True.

# we use the second as the time dimension

# (batch, time, ...)

sub_batch = batch[:, 0:t, ...]
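To see what splitting along the time dimension produces, here is a plain-Python sketch that uses nested lists in place of tensors. The helper `split_along_time` is hypothetical; it only mimics the idea of cutting a (batch, time) structure into chunks of `split_size` time steps and is not Lightning's implementation.

```python
from typing import List, Sequence


def split_along_time(batch: Sequence[Sequence[int]], split_size: int) -> List[List[List[int]]]:
    """Split a (batch, time) nested list into chunks of `split_size` time steps."""
    time_len = len(batch[0])  # assumes every sequence has the same length
    return [
        [list(seq[t:t + split_size]) for seq in batch]
        for t in range(0, time_len, split_size)
    ]


# two sequences (batch dimension) of five time steps each (time dimension)
batch = [[0, 1, 2, 3, 4], [10, 11, 12, 13, 14]]
splits = split_along_time(batch, split_size=2)
for split in splits:
    print(split)
```

Each split keeps every sequence in the batch and only narrows the time range, so the hidden state carried between splits still lines up with the same batch entries.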

To modify how the batch is split,

override pytorch_lightning.core.LightningModule.tbptt_split_batch():

class LitMNIST(LightningModule):

def tbptt_split_batch(self, batch, split_size):

# do your own splitting on the batch

return splits

Hooks¶

This is the pseudocode to describe the structure of fit().

The inputs and outputs of each function are not represented for simplicity. Please check each function’s API reference

for more information.

def fit(self):

if global_rank == 0:

# prepare data is called on GLOBAL_ZERO only

prepare_data()

configure_callbacks()

with parallel(devices):

# devices can be GPUs, TPUs, ...

train_on_device(model)

def train_on_device(model):

# called PER DEVICE

on_fit_start()

setup("fit")

configure_optimizers()

on_pretrain_routine_start()

on_pretrain_routine_end()

# the sanity check runs here

on_train_start()

for epoch in epochs:

train_loop()

on_train_end()

on_fit_end()

teardown("fit")

def train_loop():

on_epoch_start()

on_train_epoch_start()

for batch in train_dataloader():

on_train_batch_start()

on_before_batch_transfer()

transfer_batch_to_device()

on_after_batch_transfer()

training_step()

on_before_zero_grad()

optimizer_zero_grad()

on_before_backward()

backward()

on_after_backward()

on_before_optimizer_step()

configure_gradient_clipping()

optimizer_step()

on_train_batch_end()

if should_check_val:

val_loop()

# end training epoch

training_epoch_end()

on_train_epoch_end()

on_epoch_end()

def val_loop():

on_validation_model_eval() # calls `model.eval()`

torch.set_grad_enabled(False)

on_validation_start()

on_epoch_start()

on_validation_epoch_start()

for batch in val_dataloader():

on_validation_batch_start()

on_before_batch_transfer()

transfer_batch_to_device()

on_after_batch_transfer()

validation_step()

on_validation_batch_end()

validation_epoch_end()

on_validation_epoch_end()

on_epoch_end()

on_validation_end()

# set up for train

on_validation_model_train() # calls `model.train()`

torch.set_grad_enabled(True)

backward¶

- LightningModule.backward(loss, optimizer, optimizer_idx, *args, **kwargs)[source]

Called to perform backward on the loss returned in training_step(). Override this hook with your own implementation if you need to.

- Parameters

loss¶ (Tensor) – The loss tensor returned by training_step(). If gradient accumulation is used, the loss here holds the normalized value (scaled by 1 / accumulation steps).

optimizer¶ (Optional[Optimizer]) – Current optimizer being used. None if using manual optimization.

optimizer_idx¶ (Optional[int]) – Index of the current optimizer being used. None if using manual optimization.

- Return type

Example:

def backward(self, loss, optimizer, optimizer_idx): loss.backward()
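The remark about the normalized loss under gradient accumulation can be illustrated with plain numbers. This is only a sketch: the losses and the accumulation setting are made up, and plain floats stand in for tensors whose gradients would add up in the same linear way.

```python
accumulate_grad_batches = 4          # assumed setting, for illustration
batch_losses = [2.0, 4.0, 6.0, 8.0]  # made-up per-batch losses

# each loss is scaled by 1 / accumulation steps before backward();
# contributions from the scaled losses then add up across batches
scaled = [loss / accumulate_grad_batches for loss in batch_losses]
accumulated = sum(scaled)

# the accumulated value matches the mean loss over the accumulated batches
mean_loss = sum(batch_losses) / len(batch_losses)
print(accumulated, mean_loss)
```

Scaling this way keeps the effective update comparable to one step on a single batch that is `accumulate_grad_batches` times larger.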

on_before_backward¶

on_after_backward¶

on_before_zero_grad¶

- ModelHooks.on_before_zero_grad(optimizer)[source]

Called after training_step() and before optimizer.zero_grad().

Called in the training loop after taking an optimizer step and before zeroing grads. Good place to inspect weight information with weights updated.

This is where it is called:

for optimizer in optimizers: out = training_step(...) model.on_before_zero_grad(optimizer) # < ---- called here optimizer.zero_grad() backward()

on_fit_start¶

on_fit_end¶

on_load_checkpoint¶

- CheckpointHooks.on_load_checkpoint(checkpoint)[source]

Called by Lightning to restore your model. If you saved something with on_save_checkpoint() this is your chance to restore this.

Example:

def on_load_checkpoint(self, checkpoint): # 99% of the time you don't need to implement this method self.something_cool_i_want_to_save = checkpoint['something_cool_i_want_to_save']

Note

Lightning auto-restores global step, epoch, and train state including amp scaling. There is no need for you to restore anything regarding training.

on_save_checkpoint¶

- CheckpointHooks.on_save_checkpoint(checkpoint)[source]

Called by Lightning when saving a checkpoint to give you a chance to store anything else you might want to save.

- Parameters

checkpoint¶ (Dict[str, Any]) – The full checkpoint dictionary before it gets dumped to a file. Implementations of this hook can insert additional data into this dictionary.

- Return type

Example:

def on_save_checkpoint(self, checkpoint): # 99% of use cases you don't need to implement this method checkpoint['something_cool_i_want_to_save'] = my_cool_pickable_object

Note

Lightning saves all aspects of training (epoch, global step, etc…) including amp scaling. There is no need for you to store anything about training.

on_train_start¶

on_train_end¶

on_validation_start¶

on_validation_end¶

on_pretrain_routine_start¶

on_pretrain_routine_end¶

on_test_batch_start¶

- ModelHooks.on_test_batch_start(batch, batch_idx, dataloader_idx)[source]

Called in the test loop before anything happens for that batch.

on_test_batch_end¶

- ModelHooks.on_test_batch_end(outputs, batch, batch_idx, dataloader_idx)[source]

Called in the test loop after the batch.

on_test_epoch_start¶

on_test_epoch_end¶

on_test_start¶

on_test_end¶

on_train_batch_start¶

- ModelHooks.on_train_batch_start(batch, batch_idx, unused=0)[source]

Called in the training loop before anything happens for that batch.

If you return -1 here, you will skip training for the rest of the current epoch.
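A toy loop can show the effect of returning -1. This is a simplified stand-in for the training loop, not Lightning's implementation; `run_epoch` and `stop_after_three` are hypothetical names used only for this sketch.

```python
from typing import Callable, List


def run_epoch(batches: List[int], on_train_batch_start: Callable[[int, int], object]) -> List[int]:
    """Toy epoch loop: stop as soon as the hook returns -1."""
    trained = []
    for batch_idx, batch in enumerate(batches):
        if on_train_batch_start(batch, batch_idx) == -1:
            break  # skip the remaining batches of this epoch
        trained.append(batch)  # stand-in for training_step
    return trained


def stop_after_three(batch, batch_idx):
    # hypothetical hook: give up on the epoch once three batches were seen
    return -1 if batch_idx >= 3 else None


trained = run_epoch(list(range(10)), stop_after_three)
print(trained)
```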

on_train_batch_end¶

- ModelHooks.on_train_batch_end(outputs, batch, batch_idx, unused=0)[source]

Called in the training loop after the batch.

- Parameters

- Return type

on_epoch_start¶

on_epoch_end¶

on_train_epoch_start¶

on_train_epoch_end¶

- ModelHooks.on_train_epoch_end()[source]

Called in the training loop at the very end of the epoch.

To access all batch outputs at the end of the epoch, either:

Implement training_epoch_end in the LightningModule OR

Cache data across steps on the attribute(s) of the LightningModule and access them in this hook

- Return type

None

on_validation_batch_start¶

- ModelHooks.on_validation_batch_start(batch, batch_idx, dataloader_idx)[source]

Called in the validation loop before anything happens for that batch.

on_validation_batch_end¶

- ModelHooks.on_validation_batch_end(outputs, batch, batch_idx, dataloader_idx)[source]

Called in the validation loop after the batch.

- Parameters

- Return type

on_validation_epoch_start¶

on_validation_epoch_end¶

on_post_move_to_device¶

- ModelHooks.on_post_move_to_device()[source]

Called in the parameter_validation decorator after to() is called. This is a good place to tie weights between modules after moving them to a device. Can be used when training models with weight sharing properties on TPU.

Addresses the handling of shared weights on TPU: https://github.com/pytorch/xla/blob/master/TROUBLESHOOTING.md#xla-tensor-quirks

Example:

def on_post_move_to_device(self): self.decoder.weight = self.encoder.weight

- Return type

on_validation_model_eval¶

on_validation_model_train¶

on_test_model_eval¶

on_test_model_train¶

on_before_optimizer_step¶

- ModelHooks.on_before_optimizer_step(optimizer, optimizer_idx)[source]

Called before optimizer.step().

The hook is only called if gradients do not need to be accumulated. See: accumulate_grad_batches.

If using native AMP, the loss will be unscaled before calling this hook. See these docs for more information on the scaling of gradients.

If clipping gradients, the gradients will not have been clipped yet.

- Parameters

- Return type

Example:

def on_before_optimizer_step(self, optimizer, optimizer_idx): # example to inspect gradient information in tensorboard if self.trainer.global_step % 25 == 0: # don't make the tf file huge for k, v in self.named_parameters(): self.logger.experiment.add_histogram( tag=k, values=v.grad, global_step=self.trainer.global_step )

configure_gradient_clipping¶

- LightningModule.configure_gradient_clipping(optimizer, optimizer_idx, gradient_clip_val=None, gradient_clip_algorithm=None)[source]

Perform gradient clipping for the optimizer parameters. Called before optimizer_step().

- Parameters

optimizer_idx¶ (int) – Index of the current optimizer being used.

gradient_clip_val¶ (Union[int, float, None]) – The value at which to clip gradients. By default, the value passed to the Trainer is available here.

gradient_clip_algorithm¶ (Optional[str]) – The gradient clipping algorithm to use. By default, the value passed to the Trainer is available here.

Example:

# Perform gradient clipping on gradients associated with discriminator (optimizer_idx=1) in GAN
def configure_gradient_clipping(self, optimizer, optimizer_idx, gradient_clip_val, gradient_clip_algorithm):
    if optimizer_idx == 1:
        # Lightning will handle the gradient clipping
        self.clip_gradients(
            optimizer,
            gradient_clip_val=gradient_clip_val,
            gradient_clip_algorithm=gradient_clip_algorithm,
        )
    else:
        # implement your own custom logic to clip gradients for generator (optimizer_idx=0)
        ...

optimizer_step¶

- LightningModule.optimizer_step(epoch, batch_idx, optimizer, optimizer_idx=0, optimizer_closure=None, on_tpu=False, using_native_amp=False, using_lbfgs=False)[source]

Override this method to adjust the default way the Trainer calls each optimizer. By default, Lightning calls step() and zero_grad() as shown in the example once per optimizer. This method (and zero_grad()) won’t be called during the accumulation phase when Trainer(accumulate_grad_batches != 1).

- Parameters

optimizer¶ (Union[Optimizer, LightningOptimizer]) – A PyTorch optimizer

optimizer_idx¶ (int) – If you used multiple optimizers, this indexes into that list.

optimizer_closure¶ (Optional[Callable[[], Any]]) – Closure for all optimizers. This closure must be executed as it includes the calls to training_step(), optimizer.zero_grad(), and backward().

using_lbfgs¶ (bool) – True if the matching optimizer is torch.optim.LBFGS

- Return type

Examples:

# DEFAULT def optimizer_step(self, epoch, batch_idx, optimizer, optimizer_idx, optimizer_closure, on_tpu, using_native_amp, using_lbfgs): optimizer.step(closure=optimizer_closure) # Alternating schedule for optimizer steps (i.e.: GANs) def optimizer_step(self, epoch, batch_idx, optimizer, optimizer_idx, optimizer_closure, on_tpu, using_native_amp, using_lbfgs): # update generator opt every step if optimizer_idx == 0: optimizer.step(closure=optimizer_closure) # update discriminator opt every 2 steps if optimizer_idx == 1: if (batch_idx + 1) % 2 == 0 : optimizer.step(closure=optimizer_closure) else: # call the closure by itself to run `training_step` + `backward` without an optimizer step optimizer_closure() # ... # add as many optimizers as you want
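The alternating schedule above can be traced without any optimizers. The sketch below only records at which batch indices each optimizer would take a step under the same conditions; `steps_taken` is a hypothetical helper, and the mapping of index 0 to the generator and index 1 to the discriminator follows the comments in the example.

```python
from typing import Dict, List


def steps_taken(num_batches: int) -> Dict[int, List[int]]:
    """Record, per optimizer index, the batch indices at which it steps."""
    taken: Dict[int, List[int]] = {0: [], 1: []}
    for batch_idx in range(num_batches):
        # optimizer 0 (generator) steps on every batch
        taken[0].append(batch_idx)
        # optimizer 1 (discriminator) steps on every second batch
        if (batch_idx + 1) % 2 == 0:
            taken[1].append(batch_idx)
    return taken


schedule = steps_taken(num_batches=6)
print(schedule)
```

On the batches where optimizer 1 does not step, the original example still runs the closure, so the forward and backward passes happen even though no parameter update follows.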

Here’s another example showing how to use this for more advanced things such as learning rate warm-up:

# learning rate warm-up def optimizer_step( self, epoch, batch_idx, optimizer, optimizer_idx, optimizer_closure, on_tpu, using_native_amp, using_lbfgs, ): # warm up lr if self.trainer.global_step < 500: lr_scale = min(1.0, float(self.trainer.global_step + 1) / 500.0) for pg in optimizer.param_groups: pg["lr"] = lr_scale * self.learning_rate # update params optimizer.step(closure=optimizer_closure)
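The scaling rule used in the warm-up example can be isolated as a small function to see how the learning rate ramps up. This is a sketch: `warmup_lr` is a hypothetical helper, and the base learning rate of 0.02 is an assumed value chosen for illustration.

```python
def warmup_lr(global_step: int, base_lr: float, warmup_steps: int = 500) -> float:
    """Learning rate under linear warm-up, mirroring the scaling rule above."""
    lr_scale = min(1.0, float(global_step + 1) / float(warmup_steps))
    return lr_scale * base_lr


base_lr = 0.02  # assumed base learning rate, for illustration only
for step in (0, 249, 499, 1000):
    print(step, warmup_lr(step, base_lr))
```

The rate grows linearly until step 499, where the scale reaches 1.0, and stays at the base value afterwards.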

optimizer_zero_grad¶

- LightningModule.optimizer_zero_grad(epoch, batch_idx, optimizer, optimizer_idx)[source]

Override this method to change the default behaviour of optimizer.zero_grad().

- Parameters

Examples:

# DEFAULT def optimizer_zero_grad(self, epoch, batch_idx, optimizer, optimizer_idx): optimizer.zero_grad() # Set gradients to `None` instead of zero to improve performance. def optimizer_zero_grad(self, epoch, batch_idx, optimizer, optimizer_idx): optimizer.zero_grad(set_to_none=True)

See torch.optim.Optimizer.zero_grad() for the explanation of the above example.

prepare_data¶

- LightningModule.prepare_data()

Use this to download and prepare data.

- Return type

None

Warning

DO NOT set state to the model (use setup instead) since this is NOT called on every GPU in DDP/TPU

Example:

def prepare_data(self): # good download_data() tokenize() etc() # bad self.split = data_split self.some_state = some_other_state()

In DDP prepare_data can be called in two ways (using Trainer(prepare_data_per_node)):

Once per node. This is the default and is only called on LOCAL_RANK=0.

Once in total. Only called on GLOBAL_RANK=0.

Example:

# DEFAULT # called once per node on LOCAL_RANK=0 of that node Trainer(prepare_data_per_node=True) # call on GLOBAL_RANK=0 (great for shared file systems) Trainer(prepare_data_per_node=False)
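Which processes end up calling prepare_data under each setting can be enumerated with a small sketch. It assumes zero-based ranks numbered node by node; `ranks_calling_prepare_data` is a hypothetical helper and not a Lightning function.

```python
from typing import List, Tuple


def ranks_calling_prepare_data(
    num_nodes: int, gpus_per_node: int, prepare_data_per_node: bool
) -> List[Tuple[int, int]]:
    """Return the (node_rank, local_rank) pairs that would run prepare_data."""
    callers = []
    for node_rank in range(num_nodes):
        for local_rank in range(gpus_per_node):
            global_rank = node_rank * gpus_per_node + local_rank  # assumed numbering
            if prepare_data_per_node:
                should_call = local_rank == 0   # once per node
            else:
                should_call = global_rank == 0  # once in total
            if should_call:
                callers.append((node_rank, local_rank))
    return callers


per_node = ranks_calling_prepare_data(2, 4, prepare_data_per_node=True)
once = ranks_calling_prepare_data(2, 4, prepare_data_per_node=False)
print(per_node, once)
```

With a shared file system a single download is enough, which is why the once-in-total variant is suggested for that case.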

Note

Setting prepare_data_per_node with the trainer flag is deprecated and will be removed in v1.7.0. Please set prepare_data_per_node in LightningDataModule or LightningModule directly instead.

This is called before requesting the dataloaders:

model.prepare_data() initialize_distributed() model.setup(stage) model.train_dataloader() model.val_dataloader() model.test_dataloader()

setup¶

- DataHooks.setup(stage=None)[source]

Called at the beginning of fit (train + validate), validate, test, and predict. This is a good hook when you need to build models dynamically or adjust something about them. This hook is called on every process when using DDP.

Example:

class LitModel(...):
    def __init__(self):
        self.l1 = None

    def prepare_data(self):
        download_data()
        tokenize()

        # don't do this
        self.something = something_else

    def setup(self, stage):
        data = Load_data(...)
        self.l1 = nn.Linear(28, data.num_classes)

tbptt_split_batch¶

- LightningModule.tbptt_split_batch(batch, split_size)[source]

When using truncated backpropagation through time, each batch must be split along the time dimension. Lightning handles this by default, but for custom behavior override this function.

- Parameters

- Return type

- Returns

List of batch splits. Each split will be passed to training_step() to enable truncated back propagation through time. The default implementation splits root level Tensors and Sequences at dim=1 (i.e. time dim). It assumes that each time dim is the same length.

Examples:

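import collections.abc
import torch

def tbptt_split_batch(self, batch, split_size):
    # length of the time dimension of every root-level tensor or sequence
    time_dims = [len(x[0]) for x in batch if isinstance(x, (torch.Tensor, collections.abc.Sequence))]
    splits = []
    for t in range(0, time_dims[0], split_size):
        batch_split = []
        for i, x in enumerate(batch):
            if isinstance(x, torch.Tensor):
                # tensors are sliced along dim=1 (time)
                split_x = x[:, t:t + split_size]
            elif isinstance(x, collections.abc.Sequence):
                # sequences are sliced per sample
                split_x = [None] * len(x)
                for batch_idx in range(len(x)):
                    split_x[batch_idx] = x[batch_idx][t:t + split_size]
            batch_split.append(split_x)
        splits.append(batch_split)
    return splits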

Note

Called in the training loop after on_batch_start() if truncated_bptt_steps > 0. Each returned batch split is passed separately to training_step().
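To see what splitting along the time dimension produces, here is a minimal sketch on plain nested lists instead of tensors. It is a simplification for illustration, assuming a batch laid out as `[sample][time step]`; `split_along_time` is a hypothetical helper, not part of Lightning.

```python
def split_along_time(batch, split_size):
    """Split every sequence in `batch` into chunks of `split_size` time steps."""
    time_len = len(batch[0])
    # same assumption as the default implementation: all time dims are equal
    assert all(len(seq) == time_len for seq in batch), "time dimension is ambiguous"
    splits = []
    for t in range(0, time_len, split_size):
        splits.append([seq[t:t + split_size] for seq in batch])
    return splits


# 2 samples, 5 time steps each
batch = [[1, 2, 3, 4, 5], [10, 20, 30, 40, 50]]
splits = split_along_time(batch, split_size=2)
# splits[0] == [[1, 2], [10, 20]]; the last split holds the single leftover step
```

Each element of `splits` keeps every sample but only a window of time steps, so when the time length is not a multiple of the split size the final split is shorter than the others.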

teardown¶

train_dataloader¶

- DataHooks.train_dataloader()[source]

Implement one or more PyTorch DataLoaders for training.

- Return type

Union[DataLoader, Sequence[DataLoader], Sequence[Sequence[DataLoader]], Sequence[Dict[str, DataLoader]], Dict[str, DataLoader], Dict[str, Dict[str, DataLoader]], Dict[str, Sequence[DataLoader]]]

- Returns

A collection of torch.utils.data.DataLoader specifying training samples. In the case of multiple dataloaders, please see this page.

The dataloader you return will not be reloaded unless you set reload_dataloaders_every_n_epochs to a positive integer.

For data processing use the following pattern:

download in prepare_data()

process and split in setup()

However, the above are only necessary for distributed processing.

Warning

do not assign state in prepare_data

fit()…

Note

Lightning adds the correct sampler for distributed and arbitrary hardware. There is no need to set it yourself.

Example:

# single dataloader
def train_dataloader(self):
    transform = transforms.Compose([transforms.ToTensor(),
                                    transforms.Normalize((0.5,), (1.0,))])
    dataset = MNIST(root='/path/to/mnist/', train=True, transform=transform,
                    download=True)
    loader = torch.utils.data.DataLoader(
        dataset=dataset,
        batch_size=self.batch_size,
        shuffle=True
    )
    return loader

# multiple dataloaders, return as list
def train_dataloader(self):
    mnist = MNIST(...)
    cifar = CIFAR(...)
    mnist_loader = torch.utils.data.DataLoader(
        dataset=mnist, batch_size=self.batch_size, shuffle=True
    )
    cifar_loader = torch.utils.data.DataLoader(
        dataset=cifar, batch_size=self.batch_size, shuffle=True
    )
    # each batch will be a list of tensors: [batch_mnist, batch_cifar]
    return [mnist_loader, cifar_loader]

# multiple dataloader, return as dict
def train_dataloader(self):
    mnist = MNIST(...)
    cifar = CIFAR(...)
    mnist_loader = torch.utils.data.DataLoader(
        dataset=mnist, batch_size=self.batch_size, shuffle=True
    )
    cifar_loader = torch.utils.data.DataLoader(
        dataset=cifar, batch_size=self.batch_size, shuffle=True
    )
    # each batch will be a dict of tensors: {'mnist': batch_mnist, 'cifar': batch_cifar}
    return {'mnist': mnist_loader, 'cifar': cifar_loader}

val_dataloader¶

- DataHooks.val_dataloader()[source]

Implement one or multiple PyTorch DataLoaders for validation.

The dataloader you return will not be reloaded unless you set reload_dataloaders_every_n_epochs to a positive integer.

It’s recommended that all data downloads and preparation happen in prepare_data().

Note

Lightning adds the correct sampler for distributed and arbitrary hardware. There is no need to set it yourself.

- Return type

- Returns

A torch.utils.data.DataLoader or a sequence of them specifying validation samples.

Examples:

def val_dataloader(self):
    transform = transforms.Compose([transforms.ToTensor(),
                                    transforms.Normalize((0.5,), (1.0,))])
    dataset = MNIST(root='/path/to/mnist/', train=False,
                    transform=transform, download=True)
    loader = torch.utils.data.DataLoader(
        dataset=dataset,
        batch_size=self.batch_size,
        shuffle=False
    )
    return loader

# can also return multiple dataloaders
def val_dataloader(self):
    return [loader_a, loader_b, ..., loader_n]

Note

If you don’t need a validation dataset and a validation_step(), you don’t need to implement this method.

Note

In the case where you return multiple validation dataloaders, the validation_step() will have an argument dataloader_idx which matches the order here.
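How dataloader_idx lines up with the returned list can be sketched with a stripped-down loop. This is a simulation for illustration only: `Recorder` and `run_validation` are hypothetical, plain lists stand in for DataLoaders, and Lightning's real validation loop does considerably more.

```python
class Recorder:
    """Hypothetical stand-in for a LightningModule with two validation sets."""

    def __init__(self):
        self.seen = []

    def val_dataloader(self):
        # plain lists stand in for DataLoaders here
        return [["a0", "a1"], ["b0"]]

    def validation_step(self, batch, batch_idx, dataloader_idx=0):
        # remember which loader each batch came from
        self.seen.append((dataloader_idx, batch_idx, batch))


def run_validation(module):
    # simplified loop: loaders are visited in the order they were returned
    for dataloader_idx, loader in enumerate(module.val_dataloader()):
        for batch_idx, batch in enumerate(loader):
            module.validation_step(batch, batch_idx, dataloader_idx)


module = Recorder()
run_validation(module)
```

Inside `validation_step`, `dataloader_idx` can then be used to pick a metric name or a loss that is specific to each validation set.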

test_dataloader¶

- DataHooks.test_dataloader()[source]

Implement one or multiple PyTorch DataLoaders for testing.

The dataloader you return will not be reloaded unless you set reload_dataloaders_every_n_epochs to a positive integer.

For data processing use the following pattern:

download in prepare_data()

process and split in setup()

However, the above are only necessary for distributed processing.

Warning

do not assign state in prepare_data

Note

Lightning adds the correct sampler for distributed and arbitrary hardware. There is no need to set it yourself.

- Return type

- Returns

A torch.utils.data.DataLoader or a sequence of them specifying testing samples.

Example:

def test_dataloader(self):
    transform = transforms.Compose([transforms.ToTensor(),
                                    transforms.Normalize((0.5,), (1.0,))])
    dataset = MNIST(root='/path/to/mnist/', train=False, transform=transform,
                    download=True)
    loader = torch.utils.data.DataLoader(
        dataset=dataset,
        batch_size=self.batch_size,
        shuffle=False
    )
    return loader

# can also return multiple dataloaders
def test_dataloader(self):
    return [loader_a, loader_b, ..., loader_n]

Note

If you don’t need a test dataset and a test_step(), you don’t need to implement this method.

Note

In the case where you return multiple test dataloaders, the test_step() will have an argument dataloader_idx which matches the order here.

transfer_batch_to_device¶

- DataHooks.transfer_batch_to_device(batch, device, dataloader_idx)[source]

Override this hook if your DataLoader returns tensors wrapped in a custom data structure.

The data types listed below (and any arbitrary nesting of them) are supported out of the box:

torch.Tensor or anything that implements .to(…)

torchtext.data.batch.Batch

For anything else, you need to define how the data is moved to the target device (CPU, GPU, TPU, …).

Note

This hook should only transfer the data and not modify it, nor should it move the data to any other device than the one passed in as argument (unless you know what you are doing). To check the current state of execution of this hook you can use self.trainer.training/testing/validating/predicting so that you can add different logic as per your requirement.

Note

This hook only runs on single GPU training and DDP (no data-parallel). Data-Parallel support will come in the near future.

- Parameters

- Return type

- Returns

A reference to the data on the new device.

Example:

def transfer_batch_to_device(self, batch, device, dataloader_idx):
    if isinstance(batch, CustomBatch):
        # move all tensors in your custom data structure to the device
        batch.samples = batch.samples.to(device)
        batch.targets = batch.targets.to(device)
    elif dataloader_idx == 0:
        # skip device transfer for the first dataloader or anything you wish
        pass
    else:
        # fall back to the default transfer for everything else
        batch = super().transfer_batch_to_device(batch, device, dataloader_idx)
    return batch

- Raises

MisconfigurationException – If using data-parallel, Trainer(strategy='dp').

See also

move_data_to_device()

apply_to_collection()
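The phrase "anything that implements .to(…)" and "any arbitrary nesting" can be pictured with a small duck-typed sketch. This is not Lightning's move_data_to_device() but a simplified illustration of the idea; `FakeTensor` and `move_to_device` are hypothetical names, and no real device is involved.

```python
class FakeTensor:
    """Hypothetical tensor stand-in that only remembers its device."""

    def __init__(self, device="cpu"):
        self.device = device

    def to(self, device):
        # like torch.Tensor.to, return a new object rather than mutate in place
        return FakeTensor(device)


def move_to_device(data, device):
    """Recursively move anything with a .to() method; leave the rest alone."""
    if hasattr(data, "to"):
        return data.to(device)
    if isinstance(data, dict):
        return {k: move_to_device(v, device) for k, v in data.items()}
    if isinstance(data, (list, tuple)):
        return type(data)(move_to_device(v, device) for v in data)
    return data  # strings, ints, etc. pass through unchanged


batch = {"x": FakeTensor(), "meta": [FakeTensor(), "filename.png"]}
moved = move_to_device(batch, "cuda:0")
```

A custom batch class that defines its own `to(device)` method would be picked up by the first branch, which is often a simpler route than overriding the hook.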

on_before_batch_transfer¶

- DataHooks.on_before_batch_transfer(batch, dataloader_idx)[source]

Override to alter or apply batch augmentations to your batch before it is transferred to the device.

Note

To check the current state of execution of this hook you can use self.trainer.training/testing/validating/predicting so that you can add different logic as per your requirement.

Note

This hook only runs on single GPU training and DDP (no data-parallel). Data-Parallel support will come in the near future.

- Parameters

- Return type

- Returns

A batch of data

Example:

def on_before_batch_transfer(self, batch, dataloader_idx):
    batch['x'] = transforms(batch['x'])
    return batch

- Raises

MisconfigurationException – If using data-parallel, Trainer(strategy='dp').

on_after_batch_transfer¶

- DataHooks.on_after_batch_transfer(batch, dataloader_idx)[source]

Override to alter or apply batch augmentations to your batch after it is transferred to the device.

Note

To check the current state of execution of this hook you can use self.trainer.training/testing/validating/predicting so that you can add different logic as per your requirement.

Note

This hook only runs on single GPU training and DDP (no data-parallel). Data-Parallel support will come in the near future.

- Parameters

- Return type

- Returns

A batch of data

Example:

def on_after_batch_transfer(self, batch, dataloader_idx):
    batch['x'] = gpu_transforms(batch['x'])
    return batch

- Raises

MisconfigurationException – If using data-parallel, Trainer(strategy='dp').
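The three batch-transfer hooks run one after another, each receiving the batch returned by the previous one. The sketch below records that order with a plain-Python stand-in; `OrderDemo` and `apply_batch_transfer` are hypothetical names, and nothing is actually moved to a device.

```python
class OrderDemo:
    """Hypothetical module that records the order of the batch-transfer hooks."""

    def __init__(self):
        self.calls = []

    def on_before_batch_transfer(self, batch, dataloader_idx):
        self.calls.append("before")
        return batch

    def transfer_batch_to_device(self, batch, device, dataloader_idx):
        self.calls.append("transfer to " + device)
        return batch

    def on_after_batch_transfer(self, batch, dataloader_idx):
        self.calls.append("after")
        return batch


def apply_batch_transfer(module, batch, device, dataloader_idx=0):
    # each hook receives the batch returned by the previous one
    batch = module.on_before_batch_transfer(batch, dataloader_idx)
    batch = module.transfer_batch_to_device(batch, device, dataloader_idx)
    return module.on_after_batch_transfer(batch, dataloader_idx)


demo = OrderDemo()
out = apply_batch_transfer(demo, {"x": [1, 2, 3]}, "cuda:0")
```

This ordering is why CPU-side augmentations belong in on_before_batch_transfer and accelerator-side ones in on_after_batch_transfer. Every hook must return the batch, otherwise the next stage receives None.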

add_to_queue¶

- LightningModule.add_to_queue(queue)[source]

Appends the trainer.callback_metrics dictionary to the given queue. To avoid issues with memory sharing, we cast the data to numpy.

Deprecated since version v1.5: This method was deprecated in v1.5 in favor of DDPSpawnPlugin.add_to_queue and will be removed in v1.7.

get_from_queue¶

- LightningModule.get_from_queue(queue)[source]

Retrieve the trainer.callback_metrics dictionary from the given queue. To preserve consistency, we cast back the data to torch.Tensor.

- Parameters

queue¶ (